UPDATE: What the Anthropic Blacklist Means for How You Use AI in 2026

Before AI does something in your name, you need to know this principle

In late February 2026, Anthropic was blacklisted from U.S. federal contracts after refusing Pentagon demands to allow Claude to be used for mass surveillance and autonomous weapons targeting. The leadership lesson has nothing to do with politics: AI does not transfer accountability. Whatever AI produces under your name, you own it. The Disclosure Test is a practical guardrail every leader can apply today.

Somewhere between reading the Anthropic headline and finishing my coffee that morning, something clicked in my mind about the stance they took at the end of February and what it means to me as a leader.

I’ve been using Claude as my primary AI tool for almost a year now, not out of habit, and not because of marketing. I pay attention to what a company actually says about how its AI can and cannot be used. Anthropic has always been specific about its limits, and I knew they were willing to lose business over them.

Here is their current homepage headline (March 2026):

In late February 2026, they proved it.

I didn’t know yet what that story would teach me about my own work. But I should have.

In this article, you’ll learn:

Why AI accountability stays with you, not the tool, regardless of what went wrong

The one question I ask before using AI in any part of my workflow

How to set your own AI guardrails before the moment forces the decision

What Happened Between Anthropic and the Pentagon

The U.S. Department of Defense demanded that Anthropic loosen the safeguards on Claude to allow mass surveillance of Americans and autonomous weapons targeting. Anthropic refused. On February 27, 2026, the Pentagon formally designated Anthropic a supply-chain risk “effective immediately,” and President Trump directed all federal agencies to cease using Anthropic’s technology. (NPR, February 27, 2026 | Washington Post, February 27, 2026)

Anthropic sued. More than 30 employees from OpenAI and Google DeepMind publicly backed Anthropic’s position. (TechCrunch, March 9, 2026) OpenAI, for its part, announced its Pentagon deal the same day Anthropic was blacklisted. (NPR, February 27, 2026)

What happened next surprised a lot of people. The public sided with Anthropic.

Within days of the blacklist, Claude surged past ChatGPT to become the number one free app in Apple’s App Store in more than 20 countries. (Fortune, March 2, 2026 | Axios, March 1, 2026) Anthropic reported more than one million new signups per day. Claude’s daily active users hit 11.3 million by March 2, up 183% from roughly 4 million at the start of the year. (TechCrunch, March 6, 2026)

I am not here to argue who made the right political call. That is not the point of this post. But here is where my head went: if those AI tools end up deployed in a military operation, and something goes badly wrong, whether a wrong target is identified or an action is taken against the wrong person, the question that follows will not be “what did the AI decide?”

It will be “who deployed it, and what guardrails did they set?”

Why AI Accountability Stays With You

This is the principle most leaders skip past. AI can write it, generate it, and execute the recommendation. But if it goes out under your name, you own the outcome. Fully.

If you use Claude or ChatGPT to draft a report and it comes back with a hallucination, a false statistic, or a fabricated quote, and you publish it without reviewing it carefully, that is not an AI failure. That is a leadership failure. Your readers do not lose trust in the AI. They lose trust in you.

I wrote about this in “Why Ethical AI Is Harder Than It Looks” and in “Don’t Outsource Your Thinking, Even to AI.” The pattern is consistent: the more we hand tasks to AI, the more we assume accountability transfers with them. It never does.

This is not an argument against using AI. I use it constantly across my newsletter, my coaching work, and my consulting practice. Working smarter is genuinely how you grow. But owning the output is not optional.

The Disclosure Test

Here is the practical question I run before I use AI in any part of my work.

“Would I be comfortable telling my clients, my readers, or my team that AI was used in this specific part of the process?”

If yes, I proceed. If I feel any hesitation, if there is even a flicker of “I’d rather they not know,” that flicker is the answer. I don’t do it.

I call this the Disclosure Test, and it works because it forces honesty, not about the AI, but about you.

If you need to hide the fact that AI is used somewhere in your workflow, that need to hide is the answer.

Stop reading for a second. Think about your current AI workflows. Is there one where you’d be reluctant to tell the client? Name it. That’s where the work starts.

If you want help building your AI guardrails into your actual workflow, that’s exactly what the discovery call is for.

If you would hide AI use somewhere in your workflow, there is usually a reason. The output is not strong enough to stand on its own. The audience would push back. You have not done the review work required to truly own it. Any one of those is worth pausing for before anything goes out.

Anthropic was running a version of this same test at much higher stakes. They had a limit. They knew what it was. They held it, even when holding it cost them enormously. As I’ve written in “AI Ethics DeepDive: Impossible Ideal or Achievable Reality,” the organizations that navigate the AI era with their reputations intact are the ones that decided where their line was before someone else forced the decision.

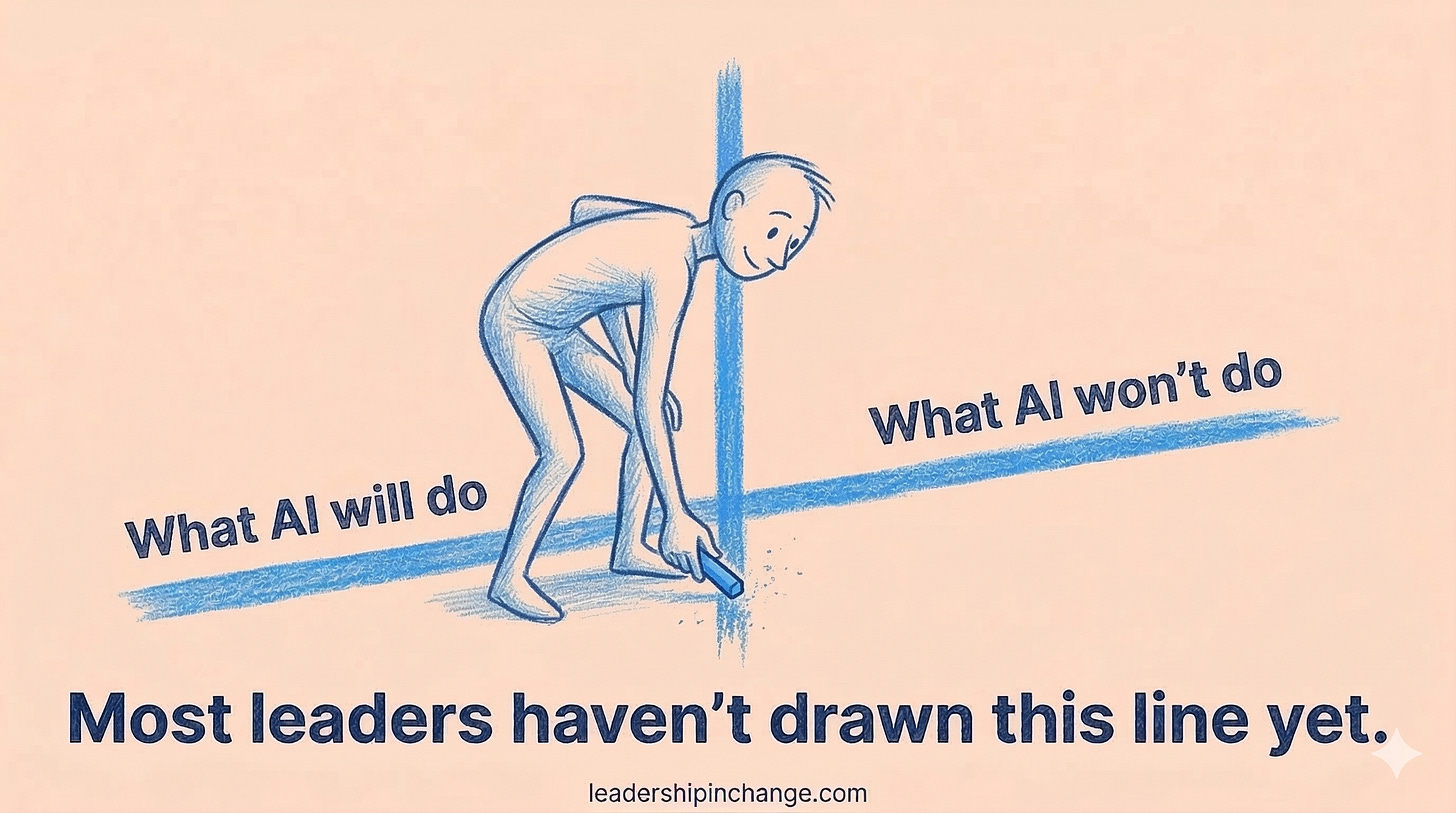

Set Your AI Guidelines Before the Pressure Arrives

Most leaders have not written their AI guidelines down. Most teams have no policy at all. Right now, while the stakes are still manageable and the pressure is relatively low, is exactly the right time to set them.

What can AI do on your team without human review? What can it never do? What does transparency with your clients look like when AI is part of the process?

These are not HR questions. They are leadership questions. And the answers say more about your values than any mission statement on a wall.

The Anthropic story is useful precisely because it makes abstract principles concrete. A company drew a line. The line cost them something real. And they are now fully accountable for having drawn it. That is what integrity looks like under actual pressure.

You do not need a Pentagon contract to figure out where your line is. Figure it out, write it down, and hold it.

Because when something goes wrong with an AI output in your organization, nobody is going to ask the AI what happened.

They are going to ask you.

If You Only Remember This

AI does not absorb blame. Whatever goes out under your name, you own it, regardless of what generated it.

Run the Disclosure Test before every AI workflow: “Would I tell my clients AI was used here?” Hesitation is your answer.

Setting your AI guardrails is a leadership decision, not a compliance task. Do it now, before the pressure arrives.

What does your Disclosure Test reveal? Is there a part of your work where you already know AI doesn’t belong? Share it in the comments.

Questions Leaders Are Asking

What happened between Anthropic and the U.S. Department of Defense in early 2026? In late February 2026, the DOD demanded that Anthropic allow Claude to be used for mass surveillance of Americans and autonomous weapons targeting. Anthropic refused, citing its usage policies. On February 27, the Pentagon designated Anthropic a supply-chain risk and the company was blacklisted from federal contracts. Anthropic responded with a lawsuit.

Can an organization be held responsible for errors in AI-generated content? Yes. From a credibility standpoint, the organization or individual who published AI-generated content owns full responsibility for its accuracy. AI tools do not carry reputational or legal accountability. The leader who approved and distributed the content does.

What is the Disclosure Test for AI use? The Disclosure Test is the question: “Would I be comfortable telling my clients, readers, or team that AI was used in this specific part of the process?” If the answer is yes, proceed. If there is any hesitation, that hesitation is the answer. It is a practical guardrail for deciding where AI belongs and where it does not.

Should leaders avoid AI to protect themselves from accountability risk? No. The goal is not to avoid AI but to use it with clear ownership. AI improves your work significantly when used well. The key is reviewing outputs carefully, maintaining transparency with your audience, and never publishing AI-generated content you have not verified and would not stand behind.

What are AI guardrails and how do leaders set them? AI guardrails are the policies that define how AI can and cannot be used within your team or organization. They answer questions like: which decisions require human review, what AI can never do, and how AI use is communicated to clients. Start by writing your answers down. Most leaders have not done this yet.

Sources

NPR (February 27, 2026): Trump bans Anthropic, OpenAI announces Pentagon deal

Washington Post (February 27, 2026): Pentagon declares Anthropic a threat to national security

Axios (March 1, 2026): Claude beats ChatGPT in U.S. app downloads after Pentagon blacklists Anthropic

Fortune (March 2, 2026): Anthropic’s Claude overtakes ChatGPT in App Store as users boycott over OpenAI’s Pentagon contract

TechCrunch (March 6, 2026): Claude’s consumer growth surge continues after Pentagon deal debacle

TechCrunch (March 9, 2026): OpenAI and Google employees rush to Anthropic’s defense in DOD lawsuit

Love the disclosure test. I think a lot of people concerned about AI taking our jobs should be more worried about the more mundane risk of us just giving our jobs away to AI — for example, the job of reviewing, making decisions and taking responsibility, as you describe.

Accountability through disclosure: the exact principle 80,000 people echoed when asked what would make AI trustworthy in their lives. They didn't fear AI capability—they feared unaccounted-for power. Your point about "AI does not transfer accountability" is what people desperately need their leaders to understand. How does your organization apply the Disclosure Test? https://creatism.substack.com/p/the-81000-person-self-portrait