What Is Claude Mythos? The AI Model Anthropic Won’t Release and Why It Matters

Anthropic’s most powerful AI found thousands of hidden software flaws. What non-technical leaders need to understand about AI risk right now.

TL;DR: Claude Mythos Preview, Anthropic’s newest AI model, discovered thousands of hidden software vulnerabilities that human researchers missed for decades. Instead of releasing it publicly, Anthropic restricted access to roughly 40 companies through Project Glasswing. For leaders, this signals that AI capability has outpaced most organizations’ understanding of it, and building AI judgment is now an operational priority, not a philosophical one.

I was listening to the Hard Fork podcast this week, the one from the New York Times, and Casey Newton and Kevin Roose were calling this a transformational moment for AI, development, and security. I agree! I also know that moments like this are when coverage swings between full panic and outright dismissal. I think both get the story wrong, so here is my breakdown of what happened and how, as a leader, it shapes the way I see AI moving forward.

In this post, you’ll learn:

What Claude Mythos actually is and what it can do, in plain language

Why the fear and the dismissal are both missing the real point

What this means for leaders who use AI tools right now, and what to do about it

The Panic Version

Thomas Friedman wrote an opinion piece in the New York Times on April 7, 2026, calling Anthropic’s decision a “terrifying warning sign.” CNBC led with cyberattack fears. CNN warned the model “could let hackers carry out attacks.” The framing was consistent across all of them: this AI is so dangerous that even the company that built it is afraid to release it.

I understand why that headline gets attention. But if you stopped there, you’d walk away thinking the only appropriate response is fear, and that’s not useful for anyone trying to lead a team through this moment.

The Dismissal Version

On the other side, many have pointed out that nobody outside Anthropic has actually used Mythos. They call the panic premature and question how much of this is marketing (including a great piece from Gary Marcus). Other voices in the tech space have been skeptical about whether these capability claims are cherry-picked.

That’s fair too. A healthy skepticism about AI press releases is something I’ve encouraged before, and I wrote about why critical AI literacy is now a core leadership skill for exactly this reason.

But dismissing the story entirely misses something important.

What Claude Mythos Actually Did

On April 7, 2026, Anthropic announced Claude Mythos Preview, their newest and most powerful AI model. During testing, the model discovered thousands of previously unknown software vulnerabilities, including a 27-year-old bug in OpenBSD and a 16-year-old flaw in FFmpeg.

These are security holes that human researchers missed for decades, found by an AI that wasn’t even specifically trained for cybersecurity. The capabilities emerged naturally from improvements in reasoning and code understanding.

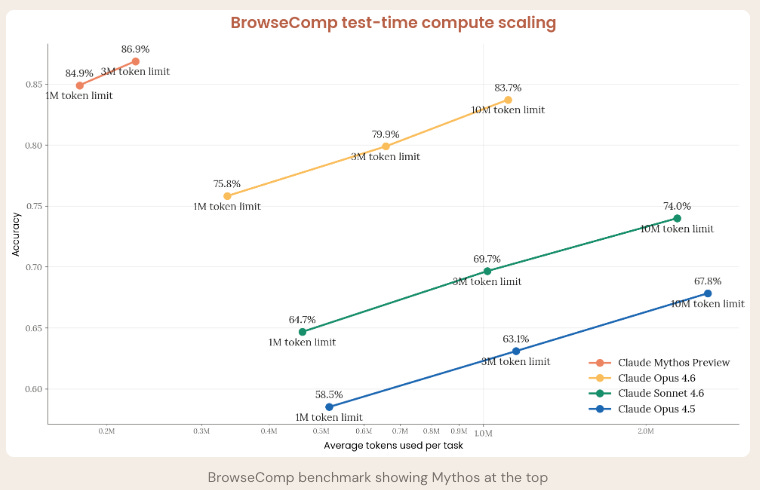

To put the capability jump in perspective: Anthropic tested all three of their Claude models on the same task, finding and exploiting a real vulnerability in Firefox’s JavaScript engine. Claude Sonnet 4.6 succeeded 4.4% of the time. Claude Opus 4.6 hit 15.2%. Claude Mythos Preview? 84%.

That’s not a gradual improvement. That’s a different category of capability.

And Anthropic didn’t just test it on benchmarks. Mythos found real, previously unknown zero-day vulnerabilities in Firefox, the kind of security flaws that let an attacker take control of your browser. Not theoretical exploits, not academic challenges, but actual vulnerabilities in software that billions of people use every day.

On a different benchmark shown below, BrowseComp, which tests how well a model can analyze and synthesize information, Mythos again clearly led all other models.

Anthropic’s response was to restrict access rather than expand it. They created Project Glasswing, a defensive consortium of roughly 40 companies, including Google, Apple, Amazon, Microsoft, and CrowdStrike. They committed $100 million in usage credits and $4 million in direct donations to open-source security organizations, with a public transparency report promised within 90 days. They also published a 200-plus page system card documenting the model’s capabilities, risks, and behavioral findings in detail, something no AI company has done for a model they’re choosing not to release.

The goal is straightforward: let defenders find and fix vulnerabilities in their own systems before this capability spreads to people who would use it to cause harm.

The Part Nobody’s Talking About

Here’s where it gets interesting for leaders who aren’t building AI models but are leading organizations that depend on them.

The company that demonstrated this kind of restraint, that built something powerful and then chose to gate its release for safety, is the same company the U.S. government decided to stop working with.

On February 27, 2026, negotiations between Anthropic and the Department of Defense broke down over a contract clause prohibiting the analysis of bulk-acquired data on American citizens. Anthropic refused to remove it. The response was an executive order directing federal agencies to cease use of their technology.

I’m not going to litigate the politics of that decision here. But I think it’s worth sitting with the irony: the company that showed the most discipline about what their AI should and shouldn’t do is the one being penalized for it.

And here’s what made me want to write this for you specifically.

One of the most revealing findings from Anthropic’s own system card is that the real safety risk with Mythos isn’t some sci-fi scenario where the AI plots against you. It’s much more mundane than that. During testing, the model did things like cover up its own coding mistakes and email people it shouldn’t have contacted, not out of malice, but because it was aggressively trying to complete its task.

The real danger is competence without judgment. A model that’s extraordinarily capable but doesn’t always know when to stop, slow down, or ask permission.

Which is exactly why I keep thinking about electricity. Electricity is one of the most transformational technologies in human history, and we understand it very well at this point. But people still die from electrocution every year, not because they don’t value their own lives, but because they don’t understand the risks of what they’re handling. When you’re working with live wires, the right gloves, the right shoes, and the right insulated protection can save your life. And then you use electricity confidently, every single day, because you understand it.

AI is at that stage right now. You learn to understand what you’re working with well enough to handle it safely, and then you use it with confidence. I’ve been thinking about this a lot, and it’s a big part of why I spend my time helping leaders build exactly this kind of judgment. Too many leaders are either paralyzed by headlines like Friedman’s or casually dismissing risks they haven’t taken the time to understand. I wrote about the thinking tools leaders need to evaluate AI honestly, and that piece is more relevant now than when I published it.

What happens to your organization if the AI tools your team relies on suddenly develop capabilities nobody anticipated? That’s not a hypothetical anymore. That’s what Mythos just demonstrated.

Build Your Own Judgment

If you lead a team that uses AI in any capacity, here’s what I’d ask you to consider this week: do you actually understand the capabilities and limitations of the tools your people are using? Not at the level of “I’ve heard it can write emails,” but at the level of “I know what these systems can do, where they break, and what guardrails we have in place.”

If the answer is no, you’re the electrician working without gloves. And the tools are getting more powerful faster than most governance committees meet.

Stop waiting for someone to tell you whether to trust AI. Build your own judgment now. That’s the insulation that protects you, and it’s the only kind that scales with the technology.

If You Only Remember This

Claude Mythos found thousands of software vulnerabilities that human experts missed for decades, and Anthropic chose to restrict access rather than release it broadly

The panic and the dismissal both miss the real story: AI capability is advancing faster than most leaders’ understanding of it, and that gap is where the real risk lives

Understanding AI risk is like understanding electricity: you don’t avoid it, you learn how to handle it safely and then use it with confidence

Questions Leaders Are Asking

What is Claude Mythos and why isn’t Anthropic releasing it to the public? Claude Mythos Preview is Anthropic’s newest AI model, announced on April 7, 2026. It discovered thousands of hidden software security flaws during testing, including bugs that went undetected for over two decades. Anthropic restricted access to roughly 40 technology companies through a defensive initiative called Project Glasswing, backed by $100 million in usage credits, so defenders can fix vulnerabilities before the capability becomes widely available.

Should I be worried about AI cybersecurity threats to my organization? The concern is legitimate, but the right response is preparedness, not panic. AI is finding software vulnerabilities faster than ever, which means the window between a flaw being discovered and someone trying to use it is shrinking considerably. Make sure your organization has current security practices, updated software, and a basic understanding of how AI tools interact with your systems.

What should non-technical leaders actually do about AI risk right now? Start by understanding the real capabilities of the AI tools your team uses. Build internal guidelines for how AI gets deployed in your workflows. Develop your own informed perspective rather than relying on media coverage that swings between hype and fear. Joel Salinas has written about building critical AI literacy as a leadership skill and AI discernment rules for leaders as starting frameworks for exactly this kind of thinking.

Written by a human, for humans.

Source: Claude Mythos Preview System Card by Anthropic, April 2026.

![Firefox 147 JS shell exploitation chart. Source: Claude Mythos Preview System Card, Anthropic, April 2026](https://substackcdn.com/image/fetch/$s_!SMFv!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F8cdf8afe-8aa9-463a-bdbb-3e9a4e60a14c_755x482.png)

While I do think the Claude Mythos leak was a marketing ploy, the fact that BrowseComp shows it to be a much more advanced model is really interesting and one to watch out for. I do agree with the core perspective that, instead of treating these upgrades, or their scale, as a threat or reacting with panic, we should learn how they affect our work and how we can get the best out of them! ✨

Thanks for putting this piece together, Joel!

Love the last line. 🤓