Stop Using AI Assistants — Build a 5-Role AI Team Instead

Why treating AI like a team instead of an assistant tripled one creator's output

TL;DR: AI content teams outperform single AI assistants when structured as five specialist roles: Researcher, Writer, Editor, Analyst, and Distributor. Briefing AI with context, examples, constraints, and output format produces dramatically better results than generic prompting. Quality gates at each stage prevent AI slop from shipping.

I keep getting more hours back.

Not because I’m working less. Because every month, I add another specialized AI agent to my workflow, and each one gives me something I can’t manufacture: time to think. Time for strategy. Time for the creative work that actually moves the needle.

Last month, I was scrolling through content from creators I follow, and I noticed something about Dheeraj Sharma, one of my favorite new creators on Substack. This guy runs YouTube channels, a travel blog, an active LinkedIn presence, AND a growing AI automation newsletter called GenAI Unplugged. No team. No employees. Full-time job on top of all of it.

I had to know his secret.

So I asked him. And what he described was a mindset shift that I think every leader reading this needs to hear. Dheeraj stopped thinking about AI as an assistant and started thinking about it as a team. Five specialist roles, each with a clear job, clear expectations, and clear quality gates.

The framework he lays out below is one of the most practical content systems I’ve seen, and I’m grateful he’s sharing it with each of you.

Dheeraj is also one of the amazing creators in Cozora.org, the live AI learning community I co-founded recently. If you want to learn AI live from creators like Dheeraj (and Leadership in Change premium subscribers get 50% off), I’d encourage you to learn more.

Building AI teams like this is one of the first things I help leaders set up in coaching sessions. Most are still stuck in the "one assistant" mindset and don't realize what's possible.

In this post, you’ll learn:

Why the “AI assistant” mindset limits your output (and what to replace it with)

The 5 specialist AI roles that handle research, writing, editing, analysis, and distribution

How to brief AI like a manager, not a user

The quality gate system that prevents AI slop from shipping

How to multiply one piece of content into 6+ platform-specific assets

What to start on Monday morning: one role and a proper brief

Now, over to Dheeraj Sharma.

How I Run a 5-Person Content Team (With Zero Hires)

I manage two YouTube channels, a travel blog, and now a growing newsletter focused on AI automation. I don’t have any employees. I have a full-time job.

But I have a 5-person content team that handles most parts of my research, writing, editing, analysis, and distribution.

They’ve never missed a deadline. They are available 24/7. And they cost less than one junior hire.

Here’s the mindset shift that made it possible.

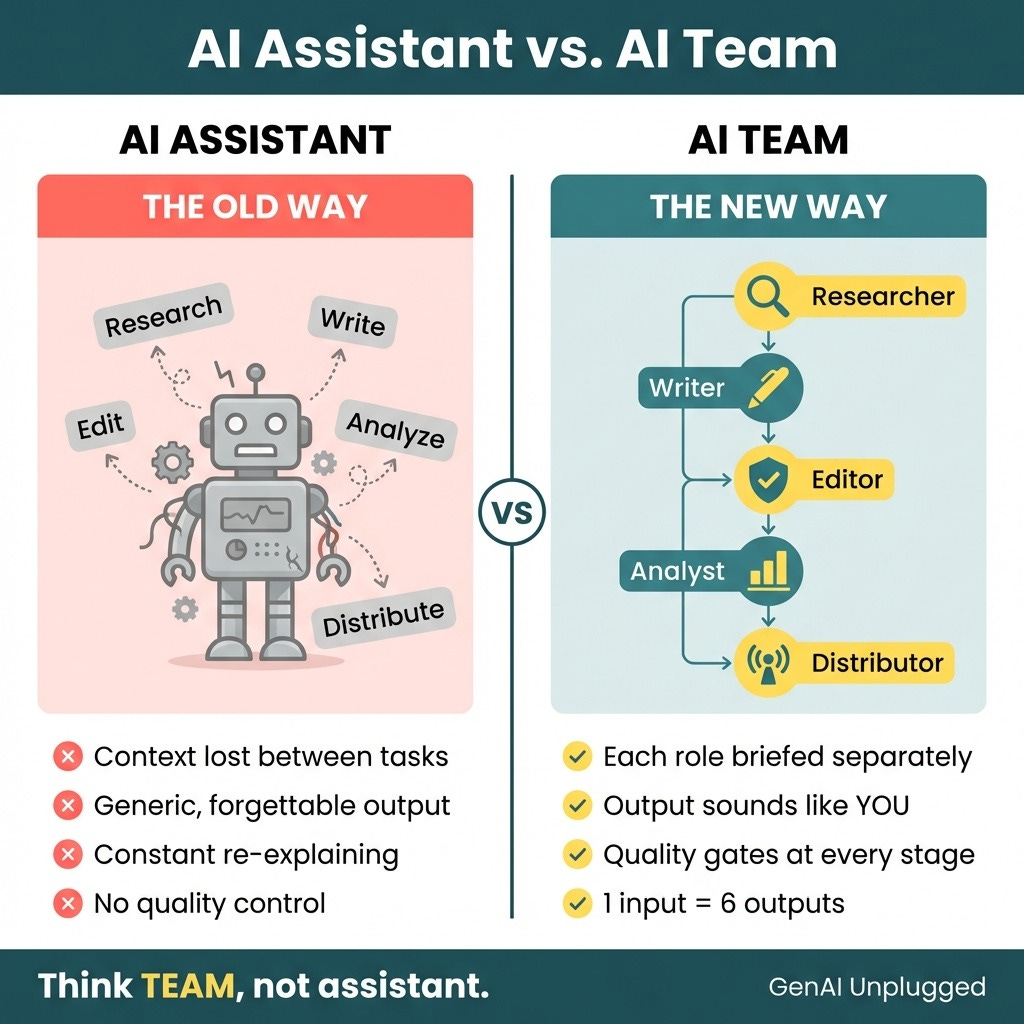

The Problem with “AI Assistant” Thinking

Many people use AI as one generalist assistant. They open ChatGPT or Claude, ask it to research something, then ask it to write something, then ask it to edit something. All in the same conversation.

The result? Context loss. Generic output. Constant re-explaining.

Sound familiar?

Well, here’s what I realized:

You don’t hire “a person” at your company. You hire specialists. Your accountant doesn’t write your marketing copy. Your marketing team doesn’t handle your legal contracts. Different roles require different expertise.

Why would AI be any different?

The shift that changed everything for me: Stop thinking “AI assistant.” Start thinking “AI team.”

When I made this mental switch, my content output tripled. Not because I worked harder, but because I finally started treating AI as specialist roles on my team instead of one giant magic box.

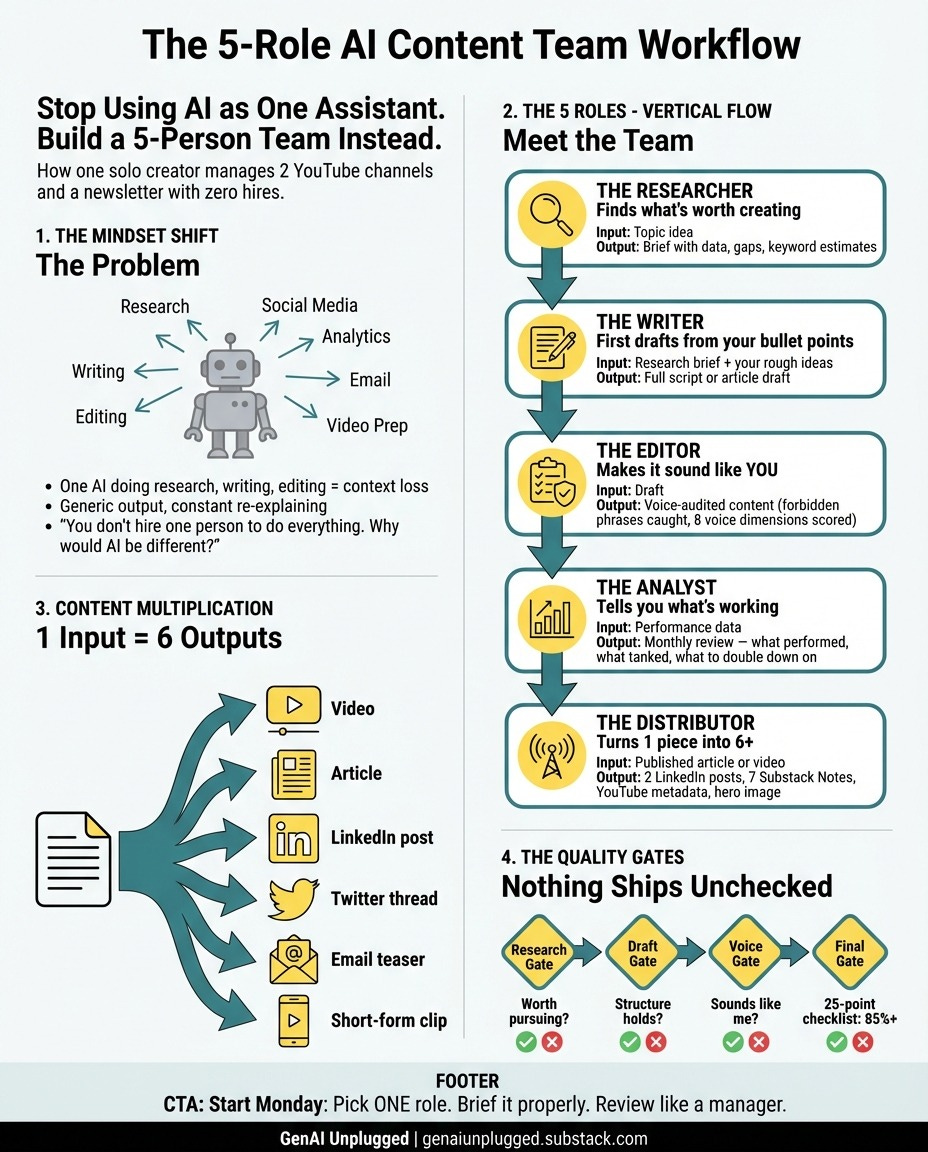

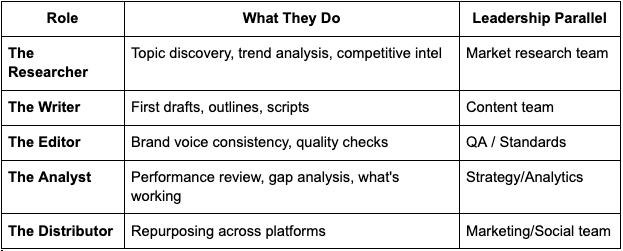

Meet My 5-Person AI Team

Let me introduce you to my team. Each role has a specific job, a clear scope, and gets briefed differently.

1. The Researcher → Finds what’s worth creating

Before I commit hours to a video or article, Researcher scans trends, analyzes what competitors are publishing, and identifies gaps. They answer one question: “Is this worth my time?”

What I get back:

A brief with 10-12 key findings,

5+ competitor articles analyzed,

actual keyword difficulty estimates, and

“People Also Ask” questions I should address.

Real data from real search results rather than AI guessing what might be popular.

An example from my YouTube use case:

I wanted to make a video about “Claude Code agents.” Before scripting, Researcher told me the search volume, what formats were ranking (tutorials beat opinion pieces), and that the average top result was 2,800 words. That intel shaped the entire video script. I knew exactly how deep to go and what angle to take.

Why is it a separate role?

Research requires breadth, i.e., scanning lots of information quickly. Writing requires depth, i.e., going narrow and detailed. Different mental modes.

When you ask one AI to “research this and then write about it,” you get shallow research and generic writing. So, I separated the roles.

2. The Writer → Outputs first drafts

Writer takes my bullet points, rough ideas, spoken notes, or video concepts and turns them into full scripts, articles, or outlines.

An example from my YouTube use case:

For some of my YouTube videos, I record a voice memo, sometimes while walking, driving, or sitting in the passenger seat. I narrate my main points for a video into my Notion database. The Writer agent transforms them into a complete script I can record from. I go from scattered thoughts to shoot-ready in an hour.

Why is it a separate role?

Writers write. They don’t research. They execute a brief. When I stopped asking one AI to “research and then write,” quality went up immediately.


3. The Editor → Makes it sound like me

This is the role most people skip, and it shows. Editor reviews everything for brand voice consistency, catches awkward phrasing, and ensures my content sounds like I wrote it, not like a robot wrote it. It is trained on my 10-year-old articles (there are 500+ on my travel blog, but you only need a few).

I’ve trained Editor on a “forbidden phrases” list: words that instantly make content sound like AI slop. Things like “revolutionary,” “game-changing,” “unlock your potential,” or fear-based phrases like “you’ll be left behind.” Editor catches these and flags them.
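A forbidden-phrases check is simple enough to sketch in a few lines of Python. This is only an illustration, not the actual Editor setup: the function name `find_forbidden_phrases` is hypothetical, and the list holds just the four phrases quoted above, where a real one would be longer and personal to your voice.

```python
import re

# Phrases quoted in the article; a real list would be longer and personal.
FORBIDDEN_PHRASES = [
    "revolutionary",
    "game-changing",
    "unlock your potential",
    "you'll be left behind",
]


def find_forbidden_phrases(text: str, phrases: list[str] = FORBIDDEN_PHRASES) -> list[str]:
    """Return the forbidden phrases found in text, ignoring case."""
    # Normalize curly apostrophes so "you’ll" matches "you'll".
    normalized = text.replace("\u2019", "'").lower()
    hits = []
    for phrase in phrases:
        # Word boundaries avoid matching inside longer words.
        pattern = r"\b" + re.escape(phrase.lower()) + r"\b"
        if re.search(pattern, normalized):
            hits.append(phrase)
    return hits


draft = "This revolutionary workflow will unlock your potential."
print(find_forbidden_phrases(draft))
```

A check like this only flags. Deciding whether to rewrite or keep a flagged phrase stays with the human reviewer.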

They also check 8 specific voice dimensions: Am I being clear over clever? Am I using concrete examples? Am I talking like a peer, not a guru?

I wrote a detailed guide on exactly how you can do it: How to train your AI with brand voice.

An example from my Substack use case:

Every article gets scored across these dimensions before publishing. Editor auto-fixes some things: passive voice gets rewritten, long paragraphs get split. But brand violations get flagged for my review. I make the final call. This is why readers tell me my content doesn’t “feel like AI”: because Editor exists and has standards. And above all, I keep closing the learning loop by feeding back where it did not do well.

Why is it a separate role?

Writing and editing are different skills. Even the best human writers need editors. Stephen King has an editor. Your AI content needs one too.

4. The Analyst → Tells me what’s working

Analyst reviews performance data across platforms, spots patterns in what’s resonating, and identifies gaps in my content strategy.

An example from my YouTube use case:

Every month, Analyst reviews my video analytics and tells me which topics and formats performed, which tanked, and what I should double down on. Honest feedback with no ego attached.

Why is it a separate role?

Creators are too close to their own work. We fall in love with ideas that don’t perform. Analyst gives me the outside perspective I need.

5. The Distributor → Turns one piece into many

This is where the real leverage happens. Distributor takes a single piece of content and repurposes it across platforms but not by copying. Each piece is adapted for its platform.

For LinkedIn, I get two variants: one data-driven (“That manual follow-up is costing you $47 per lead”), one narrative-driven (story format).

For Substack Notes, I get 7 different variations with different hooks: some contrarian, some relatable struggles, some framework-focused.

Each uses a different template so they don’t all sound the same.

An example from my Substack use case:

One article about Claude Projects became 2 LinkedIn posts, 7 Substack Notes, YouTube metadata, and a hero image concept. From one piece of content, I had two weeks of social posts, each optimized for its platform, not just copied and pasted.

Of course, the entire Draft → Review → Publish cycle exists for all of them too, with me as the human in the loop.

Why is it a separate role?

Creation and distribution are different muscles. When I tried to do both in my head, I’d create but never distribute. Now distribution happens automatically.


Content Multiplication: The Real ROI

Most creators think linearly: 1 input creates 1 output.

I think in multiplication: 1 input creates 6 outputs.

Here’s what that looks like in practice:

Starting with a YouTube video:

The video itself (obviously)

A full article adapted from the transcript (different format, same ideas)

A LinkedIn post highlighting the key insight (professional audience)

A set of Substack Notes breaking down the main points (bite-sized format)

A newsletter section with the core takeaway (email subscribers)

Short-form clip suggestions for YouTube Shorts or Reels (discovery format)

Starting with an article:

The article itself

A video script adapted for talking to camera

Social posts pulling the best quotes

An email teaser driving traffic back to the full piece

The content isn’t just copied; it’s adapted. LinkedIn sounds different than Twitter or Substack. A video script has different energy than a written article. Distributor understands this. Each piece is optimized for its platform while keeping the core message intact.

This isn’t about working harder. It’s about building a team that multiplies your output without multiplying your hours.

Leaders understand leverage. This is leverage applied to content.
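To make the multiplication concrete, here is a small Python sketch that expands one source piece into a list of assets to draft. The counts come from the Claude Projects example earlier in this post (2 LinkedIn posts, 7 Substack Notes, YouTube metadata, a hero image concept). The names `DISTRIBUTION_PLAN` and `expand_assets` are hypothetical, chosen only for illustration.

```python
# Asset counts taken from the Claude Projects example in the article.
DISTRIBUTION_PLAN = {
    "linkedin_post": 2,       # one data-driven, one narrative-driven
    "substack_note": 7,       # each with a different hook
    "youtube_metadata": 1,
    "hero_image_concept": 1,
}


def expand_assets(source_title: str, plan: dict[str, int]) -> list[str]:
    """List one placeholder label per asset the Distributor should draft."""
    assets = []
    for asset_type, count in plan.items():
        for i in range(1, count + 1):
            assets.append(f"{source_title} / {asset_type} #{i}")
    return assets


assets = expand_assets("Claude Projects article", DISTRIBUTION_PLAN)
print(len(assets))  # 11
```

Writing the plan down as data keeps the asset list explicit, so each item can still go through its own draft and review step.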

My results with this system

2 YouTube channels managed without burning out. My travel channel has 59,000 subscribers. The GenAI Unplugged channel has started getting the traction it deserves, with 1,500+ subscribers, and is getting approved for monetization (we all wait for it 😀)

Weekly Substack that ships on schedule (25+ articles and counting)

Active social presence across platforms from single recording sessions

Each article generates 10+ distribution assets automatically

Time spent per piece of content dropped from hours of manual work to minutes of review

Managing AI Like Employees

Having 5 AI “team members” means nothing if you manage them poorly. Here’s how I actually work with my AI team.

1. Brief Like You’d Brief a New Hire

When you hire someone new, you don’t say “do marketing stuff.” You give them context, examples, and clear expectations.

Same with AI. Every brief I give includes:

Context: What’s the project? What’s the goal?

Examples: What does “good” look like? (I show past work I liked)

Constraints: What should they avoid?

Output format: What exactly do I need back?

“Write me something about productivity” gets you generic content.

“Write a 1,500-word article about why most productivity systems fail for solopreneurs, in the style of my previous article [example], avoiding generic tips like ‘wake up early’” gets you something useful.

The quality of your output is directly proportional to the quality of your brief.
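One way to make the four-part brief a habit is to give it a fixed shape. The sketch below is a minimal Python illustration, assuming you assemble prompts as plain text. The `Brief` class and its `render` method are hypothetical names, and the example entry is a placeholder you would replace with your own past work.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """The four parts of a brief named in the article."""
    context: str
    examples: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    output_format: str = ""

    def render(self) -> str:
        """Assemble the brief into a single prompt string."""
        sections = [
            "CONTEXT:\n" + self.context,
            "EXAMPLES OF GOOD WORK:\n" + "\n".join(f"- {e}" for e in self.examples),
            "CONSTRAINTS:\n" + "\n".join(f"- {c}" for c in self.constraints),
            "OUTPUT FORMAT:\n" + self.output_format,
        ]
        return "\n\n".join(sections)


brief = Brief(
    context="Article on why most productivity systems fail for solopreneurs.",
    examples=["<paste a previous article you liked here>"],
    constraints=["Avoid generic tips like 'wake up early'."],
    output_format="A 1,500-word article.",
)
print(brief.render())
```

A fixed structure makes a missing section obvious before the brief ever reaches the AI.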

2. Review Like a Manager, Not a User

Most people accept or reject AI output. That’s not management; that’s using a vending machine.

Real management means:

Giving specific feedback: “This section missed the mark because...”

Explaining the gap between what you got and what you needed

Iterating with direction, not frustration

When Writer gives me a draft that’s off, I don’t start over. I tell them exactly what to fix:

“The opening is too generic. Start with the specific problem our audience faces. Reference the stat I mentioned in the brief.”

This is how you build an AI team that improves over time.

3. Build Institutional Knowledge

Here’s what most people miss:

Over time, your AI team learns your preferences.

I’ve been working with my Editor for months. At this point, they know my voice, my pet peeves, and my style. I brief less. Quality improves. It’s like working with employees who actually know you.

The key insight: The people getting generic AI output are treating AI like a vending machine. Put in prompt, get output. The people getting great output are treating AI like employees. Brief, review, iterate, improve.

Quality Gates: Nothing Ships Unchecked

I don’t trust any single team member to ship alone. Even my best human employees needed review processes. Why would AI be different?

Everything goes through checkpoints:

Research Gate: Is this topic worth pursuing? Does the data support it?

Draft Gate: Does the structure hold? Is the “big idea” clear?

Voice Gate: Any forbidden phrases? Does it score well on voice dimensions?

Final Gate: 25-point checklist. Does it pass at 85% or higher?

At each gate, I make a decision. Pass it through. Send it back with specific feedback. Or kill it entirely.

My final quality gate runs a 25-point checklist: Is the first sentence under 10 words? Are paragraphs under 4 lines? Are headers descriptive, not vague? And so on. The checklist is strict, but it’s why my content consistently hits a quality bar.
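Here is a rough Python sketch of how a checklist gate with an 85% pass mark could be scored. It implements only two of the checks named above, and it rests on assumptions: paragraphs are separated by blank lines, and a “line” is a line of the draft as written. The function names are hypothetical; this is not the actual 25-point checklist.

```python
import re
from typing import Callable

PASS_THRESHOLD = 0.85  # "85% or higher" from the article


def first_sentence_under_10_words(text: str) -> bool:
    # Treat everything up to the first ., !, or ? as the first sentence.
    first = re.split(r"[.!?]", text.strip(), maxsplit=1)[0]
    return len(first.split()) < 10


def paragraphs_under_4_lines(text: str) -> bool:
    # Assumption: paragraphs are separated by blank lines and a "line"
    # is a newline-delimited line of the draft as written.
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return all(len(p.strip().splitlines()) < 4 for p in paragraphs)


def run_final_gate(text: str, checks: list[Callable[[str], bool]]) -> tuple[float, bool]:
    """Return (share of checks passed, whether the gate is passed)."""
    passed = sum(1 for check in checks if check(text))
    score = passed / len(checks)
    return score, score >= PASS_THRESHOLD


checks = [first_sentence_under_10_words, paragraphs_under_4_lines]
draft = "I keep getting more hours back.\n\nNot because I work less."
score, ok = run_final_gate(draft, checks)
print(f"score={score:.0%} pass={ok}")
```

With 25 checks, an 85% threshold means at least 22 must pass, since 21 of 25 is only 84%.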

Remember: There is no shortcut. You have to work harder in the early days to train them, just as you would train humans when establishing SOPs with them. But it pays off over time.

Most AI content fails because there’s no QA. People generate and publish in one step. No review. No iteration. No quality control. That’s how you get AI slop with your name attached to it and why so much AI-generated content sounds identical.

My system protects my reputation. AI executes. I decide. Human judgment at every gate.

Start Monday Morning

The tools are available to everyone. Perplexity, Claude, ChatGPT - all accessible. What separates leaders who get results from leaders who get frustrated is how they THINK about these tools.

Here’s what to do Monday morning:

Pick one role to start - I recommend Researcher or Editor

Brief them properly - Context, examples, constraints, output format

Review like a manager - Specific feedback, not just accept/reject

Add roles as you build trust - Don’t try to build a 5-person team on day one

Stop thinking assistant. Start thinking team.

Key Takeaways

Think “AI team,” not “AI assistant” - Different roles, different strengths, different briefings

Multiply, don’t add - 1 piece of content should become 6

Brief like a manager - Context, examples, constraints, output format

Review like a manager - Specific feedback, iterate with direction

Build quality gates - Nothing ships without human checkpoints

This is the mindset. The system behind it (the exact prompts, workflows, and templates) is what I’m documenting on GenAI Unplugged.

My 5-Role AI Team Framework

Thank you, Dheeraj Sharma!

This is Joel again. Now, it’s a win if you only remember this:

Stop thinking “AI assistant” and start thinking “AI team.” Different roles need different expertise, different briefings, and different expectations.

The quality of your AI output is directly proportional to the quality of your brief. Context, examples, constraints, and output format make the difference between generic and useful.

Start with one role (Researcher or Editor), brief them properly, review like a manager, and add roles as you build trust.

What role would you build first for your content or leadership work: Researcher, Writer, Editor, Analyst, or Distributor? And why?

PS: Many subscribers get their Premium membership reimbursed through their company’s professional development budget. Use this template to request yours.
