Why Most AI Training Fails, and How to Fix It

Stop Training Resistance Into Compliance. Start Testing for Compatibility (Plus a FREE Assessment)

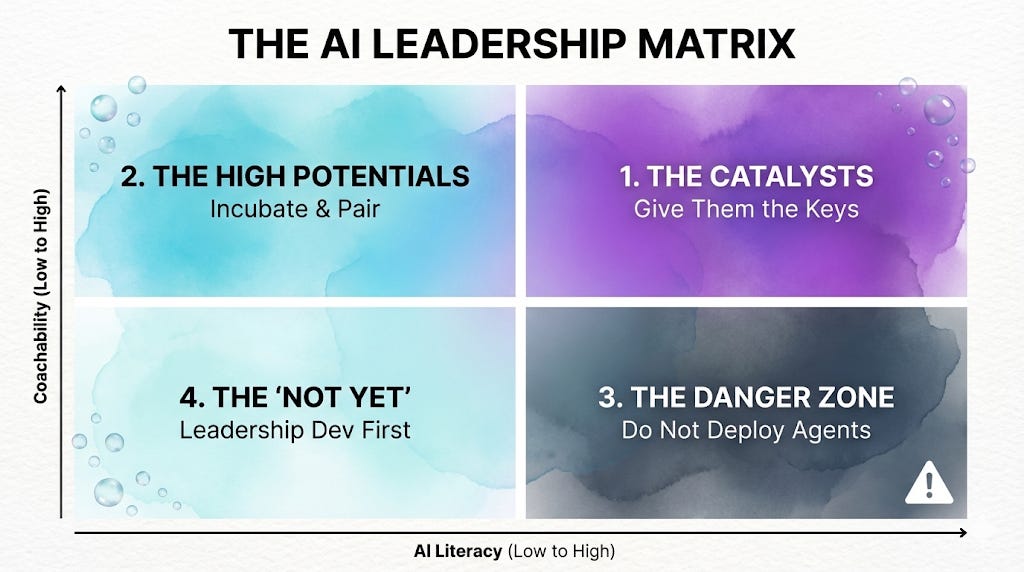

TL;DR: AI adoption failure is typically a compatibility problem, not a training problem. The AI Leadership Matrix segments leaders by literacy and coachability. A three-step diagnostic (Fail-Safe Simulation, Feedback Audit, Governance Test) identifies which leaders will sabotage AI pilots before deployment, preventing "performative adoption" where leaders complete certifications but quietly ensure nothing changes.

People know how to ride in a self-driving car.

That doesn’t mean they’ll trust it enough to get in one when they have the chance.

Knowing is different from trusting. And only one of the two actually leads to action.

This is exactly why most AI training programs produce impressive certificates and zero adoption. Organizations spend six figures teaching leaders how to use AI agents while completely missing whether those leaders will actually trust them enough to deploy.

Here’s what’s showing up in real time: leaders complete every workshop, ace every certification, and then quietly route AI outputs through manual approval. They run AI in parallel with old workflows “just to verify.” They champion pilots publicly while ensuring teams know the “real work” happens the old way.

The bottleneck isn’t knowledge. It’s trust. And trust isn’t built through better prompting techniques.

When you’ve spent years building expertise as “the person who knows,” an AI agent that does your work in seconds doesn’t feel like a productivity tool. It feels like proof you’re becoming obsolete. No certification fixes that.

I asked Anna to write this post because of her expertise and experience.

Anna Levitt is the founder of Bubble Boss Co, where she helps organizations diagnose which leaders are ready to manage AI agents and which ones will quietly sabotage them, no matter how much training you provide. A CPCC-certified coach with 20 years in People and Culture, including scaling a company from a startup to around 1,000 employees, she developed a diagnostic eye for the gap between what leaders say they want and what their behavior actually protects. She writes about the human side of AI transformation at How to Boss AI on Substack. What she’s discovered through two decades of watching leadership patterns: most AI adoption failures aren’t technical failures, they’re compatibility failures.

In this guest post, Anna reveals:

Why “performative adoption” happens when you train incompatible leaders

The AI Leadership Matrix that segments leaders by readiness, not just skill

A 3-step diagnostic to identify who will sabotage your pilot before it launches

How to shift from managing people to managing hybrid teams of humans and agents

The difference between utility doubt (solvable with training) and identity threat (not solvable with training)

✨ AND don’t miss our free AI Readiness Assessment below! ✨

If you’ve ever wondered why your best-trained leaders still resist AI adoption, this framework will change how you think about deployment.


Take it away, Anna Levitt.

From Coaching to Compatibility: Knowing When “AI Resistance” Is a Signal, Not a Skill Gap

Most organizations treat AI adoption as a training problem. Or an org structure issue. Or data scattered across functions. There are more layers to what goes wrong with AI adoption than most people want to admit. Today, I want to focus on one: coaching and compatibility in leaders.

Here’s what’s showing up right now: organizations have access to powerful new tools (Agents, LLMs), but managers aren’t using them. The default response is to push them toward training on prompting, use cases, and technical literacy. More workshops, more certifications.

After running diagnostics on leadership teams preparing for AI transformation, I’ve found that technical literacy is rarely the bottleneck. The pattern I see repeatedly: leaders who complete every certification and workshop but quietly sabotage the tools in practice. They become review bottlenecks, routing AI outputs through manual approval. They run AI in parallel with old workflows “just to verify,” never trusting enough to replace their process. They champion pilots publicly while ensuring teams know the “real work” happens the old way.

The problem isn’t technical skill. It’s identity protection.

These leaders can’t let go of being “the person who knows.” The expert. The one who handles it. And honestly? That makes sense. When you’ve spent years building expertise, an AI agent that does your work in seconds doesn’t feel like a tool. It feels like a threat.

No amount of prompt engineering training fixes that. Often, more training makes the resistance worse.

Training a manager who fundamentally resists the concept of shared agency doesn’t create adoption. It creates “Performative Adoption,” where leaders go through the motions of using the tools while making sure nothing actually changes about how work gets done.

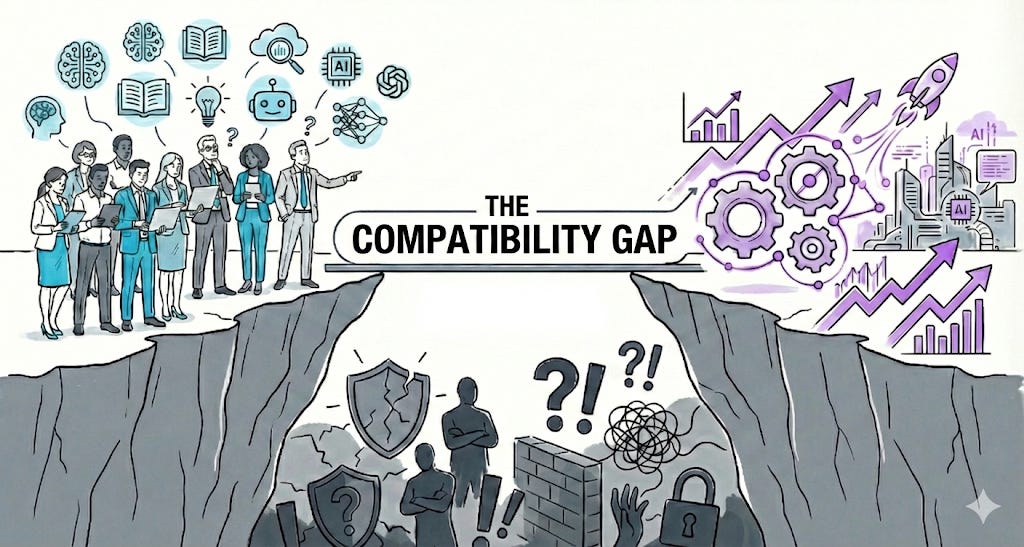

Across the mid-market organizations I’ve worked with, the real bottleneck isn’t a Skill Gap. It’s a Compatibility Gap.

Too many organizations are trying to install autonomous agents into leadership structures that are fundamentally incompatible with them. If you want AI pilots to succeed, the question isn’t “Does this leader know how to use AI?” The question is “Is this leader compatible with AI?”

The Silent Pilot Killer: Identity vs. Utility

When a leader resists an AI agent, it usually comes from one of two places:

Utility Doubt: “I don’t think this tool is useful or accurate enough.” This is solvable with better data, better training, better use cases.

Identity Threat: “If this agent does X, what is my value?” This is not solvable with training.

And here’s the thing: the fear underneath the Identity Threat is rational. When someone spent years building expertise as “the person who knows,” an AI agent that replicates that work in seconds isn’t a productivity boost. It’s an existential question. The issue isn’t that these leaders are resistant to change. It’s that this particular change threatens the foundation of their professional identity.

Traditional change management treats everyone as if they’re in the Utility Doubt camp. Flood them with workshops. Update the process docs. But for a leader facing Identity Threat, an AI agent isn’t a tool to be learned. It’s a rival to be managed.

If you hand a powerful, semi-autonomous agent to a defensive leader with low psychological safety, they will sabotage the pilot. Sometimes consciously, often not. But it will happen.

Before deploying agents, you need to diagnose who is actually ready to manage them.

The Framework: The AI Leadership Matrix

To determine readiness, I assess leaders on two axes:

AI Literacy: Do they understand the basics of probability, risk, and agentic workflows?

Coachability (The “Human OS”): Do they seek feedback? Do they own their mistakes? Can they learn in public without getting defensive?

This creates four distinct profiles:

1. The Catalysts (High Coachability / High Literacy)

These are your Catalysts. They understand the tech and they have the emotional security to manage a non-human agent without feeling threatened by it.

Strategy: Give them the keys. Let them run the high-stakes pilots. They’ll set the governance standards for the rest of the organization.

2. The High Potentials (High Coachability / Low Literacy)

This is your High Potential group. They may not know what an “agent” really is yet. But they’re curious, open to feedback, and not threatened by change. That combination is more valuable than technical fluency.

Strategy: Pair them with technical leads in low-stakes sandbox environments. Their curiosity will do the rest.

3. The Danger Zone (Low Coachability / High Literacy)

This is often the most dangerous quadrant. These leaders are technically sharp. They might be your best engineers or data scientists. But they’re also defensive, hoard power, or resist transparency.

Strategy: Avoid deploying autonomous agents here until leadership dynamics shift. This is where pilot theater happens: impressive demos, zero adoption. A highly capable but uncoachable leader can use AI to amplify their own biases or build opaque systems that only they understand.

I want to be clear: this isn’t about labeling people as “bad.” It’s about recognizing that some leaders need different support before they’re ready to manage AI. Pushing agents onto someone who isn’t ready doesn’t help them. It sets them up to fail.

4. The “Not Yet” (Low Coachability / Low Literacy)

Strategy: These leaders need fundamental leadership development (psychological safety, feedback culture) before they touch AI. In practice, this often means removing AI adoption from their KPIs entirely so they don’t fake readiness to meet metrics.

This isn’t a permanent label. It’s a current state. People move between quadrants. The goal is to meet them where they are, not where you wish they were.
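For teams that track readiness in a spreadsheet or a small script, the four profiles can be written down as a simple lookup. This is only a sketch: it assumes each axis has already been judged as high or low by a human assessor (the matrix itself does not prescribe a scoring method), and the names `Profile`, `STRATEGY`, and `classify` are hypothetical, chosen for illustration.

```python
from enum import Enum


class Profile(Enum):
    CATALYST = "Catalyst"
    HIGH_POTENTIAL = "High Potential"
    DANGER_ZONE = "Danger Zone"
    NOT_YET = "Not Yet"


# Strategy summaries paraphrased from the four profiles described above.
STRATEGY = {
    Profile.CATALYST: "Run the high-stakes pilots and set governance standards.",
    Profile.HIGH_POTENTIAL: "Pair with technical leads in low-stakes sandboxes.",
    Profile.DANGER_ZONE: "Hold off on autonomous agents until leadership dynamics shift.",
    Profile.NOT_YET: "Start with leadership development; remove AI adoption from KPIs.",
}


def classify(coachable: bool, literate: bool) -> Profile:
    """Map the two axes (assumed to be pre-judged as high/low) to a profile."""
    if coachable and literate:
        return Profile.CATALYST
    if coachable:
        return Profile.HIGH_POTENTIAL
    if literate:
        return Profile.DANGER_ZONE
    return Profile.NOT_YET


if __name__ == "__main__":
    profile = classify(coachable=True, literate=False)
    print(profile.value, "->", STRATEGY[profile])
```

Because the profile is a current state rather than a permanent label, the inputs are meant to be re-assessed over time rather than stored once.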

We’ve created an interactive AI Readiness Assessment based on Anna’s framework. In 5 minutes, you’ll discover where you fall in the AI Leadership Matrix (Catalyst, High Potential, Danger Zone, or Not Yet) and get specific next steps for your situation. It’s FREE, check it out!

What Happens When You Skip the Diagnostic

The cost of deploying agents to incompatible leaders isn’t just failed pilots. It compounds:

Pilot Theater: Leaders run impressive demos while teams quietly revert to old tools. Adoption metrics look good on slides while actual usage flatlines.

Lost Trust: When a Danger Zone leader builds an opaque AI system that fails, the entire organization becomes skeptical of AI. One bad experience can poison the well for everyone.

Talent Attrition: Your best people leave when they see AI being used to consolidate power rather than distribute capability. High performers don’t stick around to watch defensive leaders hoard information.

Wasted Training Investment: You’ve spent six figures on training that taught people how to use AI, when the real blocker was whether they were willing to share agency with a non-human system.

In my experience, organizations that skip the compatibility diagnostic end up spending more on change management after the pilot fails than they would have spent diagnosing readiness upfront.

The Diagnostic: Run a “Readiness Clinic”

How do you know who is who? Not by launching a pilot and hoping for the best. By running a Readiness Clinic before deployment.

Here’s a 3-step diagnostic to segment your leaders:

Step 1: The “Fail-Safe” Simulation

Give your leadership cohort a low-stakes problem to solve using a basic AI tool. But set it up so the AI will make a mistake (a hallucination or a logic error). Then watch the reaction.

Compatible Leader: “Interesting, it got that wrong. I wonder why? Let’s adjust the prompt.” (Curiosity)

Incompatible Leader: “See? I told you this thing is useless. It’s dangerous.” (Validation of existing bias)

The difference isn’t intelligence. It’s orientation. One leader sees a puzzle. The other sees proof they were right to be skeptical.

Step 2: The Feedback Audit

Look at the leader’s last 6 months of performance reviews or 360-degree feedback. Ignore the “Technical Skills” section entirely. Look only at: reaction to feedback, vulnerability, and transparency.

Here’s the principle: if a leader cannot accept coaching from a human peer, they are unlikely to trust output from an AI agent. If they view human feedback as an attack on their status, they will view AI correction as a “glitch” to be overridden.

Step 3: The Governance Test

Ask the leader to draft “Rules of Engagement” for their proposed agent.

Compatible Leader: Focuses on guardrails, human review, and ethical checks. “How do we ensure it doesn’t mess up?”

Incompatible Leader: Focuses on speed and removal of oversight. “How can this do my work for me so I don’t have to look at it?”

The first response shows someone who understands accountability. The second shows someone looking to offload responsibility.
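If you want to keep notes from a Readiness Clinic in a consistent shape, the three steps above can be recorded as one observation each. The sketch below is an assumption about how such notes might be structured, not part of the diagnostic itself: the `ClinicResult` class and its field names are hypothetical, and it deliberately reports which steps raised a concern instead of computing a score, since no scoring rule is defined here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ClinicResult:
    """One assessor-recorded observation per diagnostic step (hypothetical record)."""

    curious_when_ai_erred: bool   # Step 1: Fail-Safe Simulation
    receptive_to_feedback: bool   # Step 2: Feedback Audit
    focused_on_guardrails: bool   # Step 3: Governance Test

    def concerns(self) -> List[str]:
        """Return the steps where the leader showed the incompatible pattern."""
        checks = [
            ("Fail-Safe Simulation", self.curious_when_ai_erred),
            ("Feedback Audit", self.receptive_to_feedback),
            ("Governance Test", self.focused_on_guardrails),
        ]
        return [step for step, passed in checks if not passed]


if __name__ == "__main__":
    result = ClinicResult(
        curious_when_ai_erred=True,
        receptive_to_feedback=False,
        focused_on_guardrails=True,
    )
    print(result.concerns())  # ['Feedback Audit']
```

Listing the specific steps keeps the conversation on what support a leader needs next, which fits the point that these are signals and not verdicts.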

Moving from Managing People to Managing Hybrid Teams

The transition to agentic workflows is not a tech upgrade. It’s a management shift. Organizations are asking managers to oversee a hybrid workforce of humans and synthetic agents. That’s a fundamentally different job from the one most managers signed up for.

The core requirement for this new era isn’t prompt engineering. It’s Governance.

A compatible leader understands that they’re responsible for the agent’s output, just as they’re responsible for a junior employee’s output. They treat the agent as a team member who needs coaching, monitoring, and course-correction. Not a magic box that removes their accountability.

If you want AI transformation to stick, stop trying to “train” resistance out of people. Resistance isn’t always a skill gap. Sometimes it’s a signal that someone needs different support, or that they’re not ready yet.

Instead: identify your Catalysts and High Potentials. Empower them to create the initial wins. Let culture follow results, not the other way around. Check the Human OS first.

Thank you, Anna Levitt!

Here’s what I’ve learned working with Fortune 500 execs, nonprofit directors, and church leaders: compatibility matters more than capability. A leader with high coachability and low technical skill will adopt AI faster than a technically brilliant leader who views feedback as a threat.

Before you deploy your next AI pilot, run Anna’s three-step diagnostic:

The Fail-Safe Simulation - Do they get curious or defensive when AI makes a mistake?

The Feedback Audit - How do they actually respond to coaching from humans?

The Governance Test - Do they focus on guardrails or removing oversight?

The answers reveal adoption readiness better than any certification.

I’ve watched organizations waste six figures on training programs while ignoring whether leaders could psychologically handle sharing agency with a non-human system. The result? Performative adoption. Impressive demos with zero implementation.

Anna’s diagnostic flips this. Instead of training resistance into compliance, it identifies compatibility first. Then it meets leaders where they are.

IF YOU ONLY REMEMBER THIS:

Most AI adoption failures aren’t technical failures - they’re compatibility failures between leaders and the agents they’re supposed to manage

Run the 3-step diagnostic before deployment: Fail-Safe Simulation, Feedback Audit, and Governance Test reveal readiness better than any skills assessment

“If a leader cannot accept coaching from a human peer, they are unlikely to trust output from an AI agent” - Anna Levitt

One question for you: Have you seen leaders who completed all the AI training but still find ways to avoid actually using the tools? What pattern are you noticing?

