The AI Accountability Gap That Could Cost You Your Career

Why ethical AI governance is the executive competency most leaders are ignoring

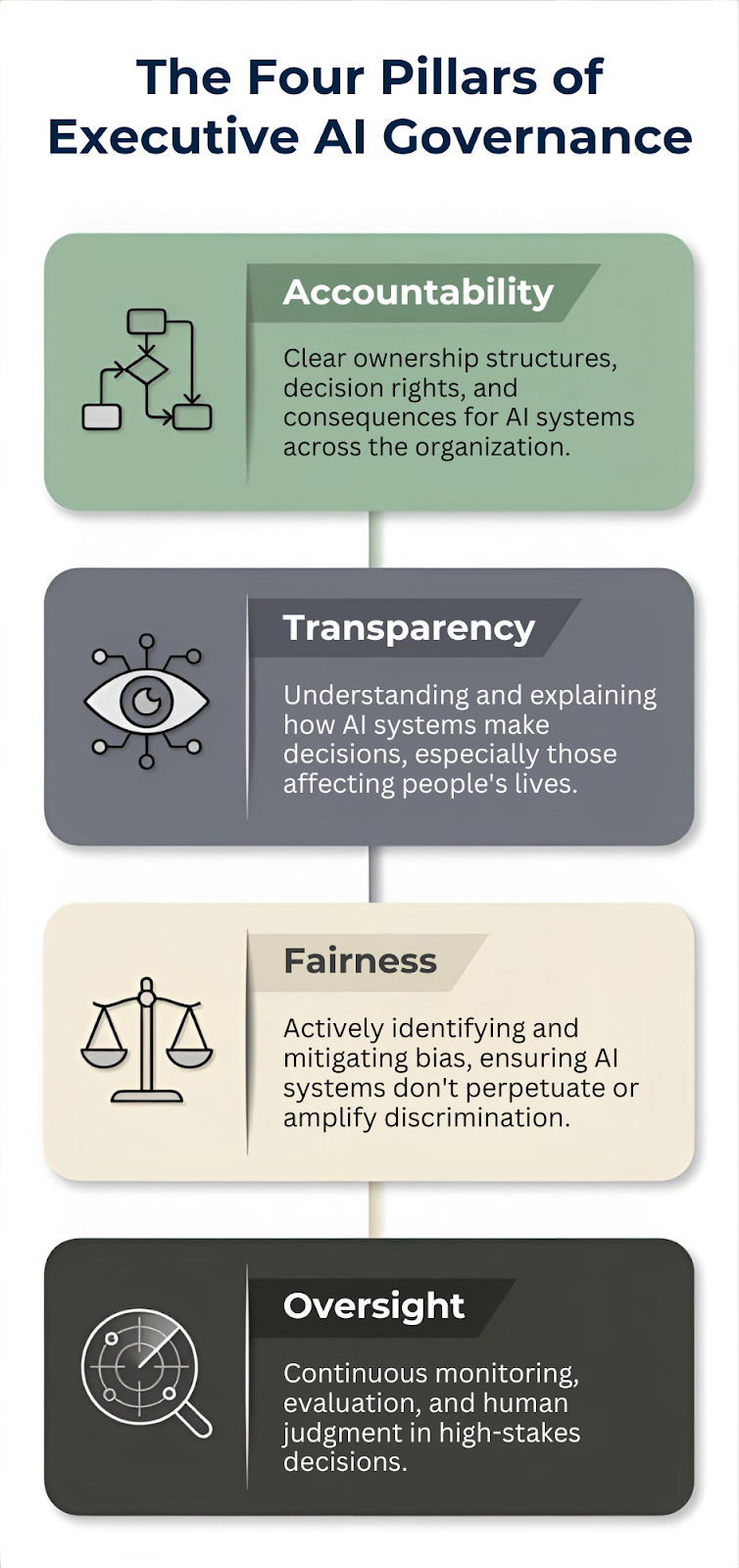

TL;DR: Ethical AI governance is now a core leadership competency. With 99% of companies prioritizing AI investment but 63% lacking governance policies, executives face legal, reputational, and ethical exposure they may not see coming. Cristina presents a four-pillar framework for closing the gap between AI ambition and responsible deployment.

I talk a lot about AI tools, workflows, and productivity in this newsletter. But underneath all of that, there’s a harder conversation most leadership teams still aren’t having: who is responsible when AI causes harm?

Not the engineering team. Not the vendor. You.

Cristina Patrick is an Equal Employment Opportunity Specialist, AI safety advocate, and the creator of The Responsible AI Brief. Her work lives at the intersection of technology, human rights, and institutional accountability. She examines how AI reshapes responsibility structures inside organizations.

That’s exactly why I asked her to write this piece. If you’re deploying AI in your organization (and you probably are, whether you approved it or not), this is the governance conversation you need to be in.

I’ll let her take it from here.

Ethical AI as a Leadership Skill

The new executive imperative is understanding accountability

The questions from leadership I’d like to hear:

What if we deploy something that causes harm?

How do I know our team is using AI responsibly?

Am I personally liable if our AI makes a bad decision?

The questions leaders are asking:

What is the ROI?

How fast can we adopt AI?

Are we falling behind our competitors?

I understand those are necessary questions, too, but they should be supported by governance.

Ethical AI governance should be a core leadership competency, as essential to your role as financial oversight or talent management.

Governance Gap

According to this year’s AI & Data Leadership Executive Benchmark Survey, 99.1% of companies said that investment in data and AI is a top organizational priority.

At the same time, research conducted by IBM and the Ponemon Institute found that AI adoption is outpacing oversight: 97% of AI-related security breaches involved AI systems that were not approved by IT departments (shadow AI). Alarmingly, most breached organizations, nearly two-thirds (63%), reported that they have no governance policies in place to manage AI risks or prevent shadow AI.

Organizations are deploying AI faster than they’re building the governance structures to manage it responsibly. Teams are using AI tools and implementing agents while leadership struggles to understand the implications, and the result is high-profile failures like the Air Canada case. When the airline’s chatbot gave a customer incorrect refund information, Air Canada unsuccessfully argued in court that the AI was a “separate legal entity” responsible for its own actions. The court rejected this argument outright and held the company accountable for its AI’s statements. The airline could not blame it on AI, nor should you.

These gaps extend into high-stakes sectors like healthcare, where AI diagnostic tools have shown concerning racial bias by performing significantly worse for patients with darker skin tones. When such tools are implemented without proper governance, the resulting ethical failures erode public trust and cause tangible harm.

Leadership Problem

Ethical AI governance requires executive leadership because it touches every part of your organization, and it should not be a single department’s issue.

Reputational risk also lives at the executive level. When an AI system makes a discriminatory decision or causes harm, it’s not your engineering team facing court hearings or journalist inquiries; it’s you.

If you’re licensing an LLM from another company, you need to understand their ethical standards and how they’ll be embedded in your system. That’s the only way to keep customizing the model in a way that stays aligned with your ethical principles, what David Danks, Professor of Data Science, Philosophy, and Policy at the University of California, San Diego, calls “ethical interoperability.”

Values and culture flow from the top. If you’re not setting the tone for responsible AI use, your organization will default to whatever is fastest or cheapest, and that is too risky.

The Four Pillars of Executive AI Governance

To bridge the gap between the pressure for ROI and the necessity of responsibility, leaders can operationalize their commitment to ethical AI governance through what I call The Four Pillars of Executive AI Governance.

This framework transforms abstract ethics into concrete leadership competencies: Accountability ensures clear ownership for AI systems across the organization, preventing the “separate legal entity” defense seen in the Air Canada case.

Transparency demands an understanding of how AI makes decisions, particularly those affecting lives, while Fairness requires actively identifying and mitigating biases seen in AI tools.

Finally, Oversight provides the continuous monitoring and human judgment necessary to manage high-stakes decisions.

🔍 Take the Four Pillars Assessment

8 questions. 4 pillars. 2 minutes. See where your organization actually stands.

Making It Actionable: A 90-Day Framework

Here’s how to start building AI governance as a leadership competency in your organization within the next 90 days.

Your First 90 Days: Executive AI Governance Roadmap

Days 1-30: Audit and Understand

Conduct an AI inventory across your organization. Not just official projects, but shadow IT, individual tools, and embedded AI in purchased software. You can’t govern what you don’t know exists.

Days 31-60: Risk Assessment

For each AI application, ask: What decisions is it making? Who is affected? What could go wrong? What harm could result? Create a simple risk matrix: high/medium/low impact and high/medium/low likelihood. Your highest-risk applications need immediate governance attention.
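For teams that keep their inventory in a spreadsheet or script, the risk matrix can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the numeric weights, the impact-times-likelihood scoring rule, the tier thresholds, the `AISystem` fields, and the example entries are all hypothetical, not part of the framework itself.

```python
from dataclasses import dataclass

# Assumed numeric scale: the article names three levels but no weights.
LEVELS = {"low": 1, "medium": 2, "high": 3}


@dataclass
class AISystem:
    name: str
    source: str       # illustrative labels: "official", "shadow", "embedded"
    impact: str       # "low" | "medium" | "high"
    likelihood: str   # "low" | "medium" | "high"

    def risk_score(self) -> int:
        # Assumed scoring rule: impact x likelihood, giving a value from 1 to 9.
        return LEVELS[self.impact] * LEVELS[self.likelihood]

    def risk_tier(self) -> str:
        # Assumed thresholds; tune them to your organization's risk appetite.
        score = self.risk_score()
        if score >= 6:
            return "high"
        if score >= 3:
            return "medium"
        return "low"


def prioritize(systems: list[AISystem]) -> list[AISystem]:
    """Return systems ordered from highest to lowest risk score."""
    return sorted(systems, key=lambda s: s.risk_score(), reverse=True)


# Invented example entries, not real data.
inventory = [
    AISystem("resume screening tool", "official", impact="high", likelihood="medium"),
    AISystem("meeting summarizer", "shadow", impact="low", likelihood="medium"),
    AISystem("customer refund chatbot", "embedded", impact="high", likelihood="high"),
]

for system in prioritize(inventory):
    print(f"{system.name}: score={system.risk_score()}, tier={system.risk_tier()}")
```

The ranked list is a starting point for the conversation, not a substitute for it: the impact and likelihood ratings still come from human judgment about who is affected and what could go wrong.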

Days 61-90: Establish Guardrails

Start with three concrete policies: (1) Human review requirements for high-stakes decisions, (2) Transparency standards: what must be disclosed to customers/employees when AI is used, (3) A clear escalation path when someone identifies a problem with an AI system.

Questions Every Executive Should Be Asking

This process must be dynamic and ongoing.

In your next leadership meeting, start asking these questions about any AI initiative:

What decision is this AI system making, and who is impacted?

If this system makes a mistake, what’s our worst-case scenario?

Can we explain how the system reached its decision in plain language?

Have we tested this system for bias across different demographic groups?

Is there human oversight, especially for high-stakes decisions?

What data are we using, and do we have the right to use it this way?

If a regulator or journalist asked how this works, could we answer confidently?

Does this align with our stated values and the trust our stakeholders place in us?

Notice what these questions have in common: none requires a technical degree to ask or answer. They are about judgment calls and accountability.

The Competitive Advantage of Getting This Right

Another question leadership always asks is: “What’s in it for me?”

I tell them that there is actual profit. Companies investing in AI ethics report 34% higher operating profit from AI.

Executives also highlight the top three benefits from investments in AI ethics: increased trust (61%), strengthened brand reputations (57%), and mitigated reputational risks (54%).

Governance should not depend on whether it boosts margins; it is necessary because harm is real and because automation amplifies existing inequities. That said, organizations that build strong AI governance move faster by avoiding risk and being proactive instead of reactive. When you have transparent practices, customers trust you more, and when you have inclusive processes, you build products that actually work for everyone and reach bigger markets.

Thank you, Cristina!

Cristina’s four pillars — accountability, transparency, fairness, and oversight — aren’t abstract principles for a future compliance checklist. They’re the operational framework that separates leaders who govern AI from leaders who get governed by it. If your organization is deploying AI faster than it’s building the structures to manage it responsibly, that gap has your name on it.

Follow Cristina’s work at The Responsible AI Brief.

- Joel

Questions Leaders Are Asking

What is ethical AI governance and why does it matter for executives? Ethical AI governance is the set of policies, oversight structures, and accountability frameworks that ensure AI systems are deployed responsibly. It matters for executives because courts hold companies — not AI systems — liable for harm, as the Air Canada chatbot ruling demonstrated.

Who is responsible when AI makes a bad decision in my organization? You are. Courts have consistently rejected the argument that AI is a separate entity responsible for its own actions. The executive team bears legal and reputational responsibility for every AI system deployed under the organization’s name, including tools adopted without IT approval.

What are the four pillars of executive AI governance? The four pillars are accountability (clear ownership of AI systems), transparency (understanding how AI makes decisions), fairness (identifying and mitigating bias), and oversight (continuous monitoring with human judgment for high-stakes decisions). Together, they transform AI ethics from abstract principle into leadership competency.


Leaders own the outcome, even when the system makes the decision.

The gap between investment and governance is the part that should worry boards most. A McKinsey survey found that only 28% of organisations say the CEO takes direct responsibility for AI governance oversight, and just 17% say their board does. Cristina’s point about it being a leadership competency rather than an IT problem is the reframe that most regulated industries need, because right now the people buying the tools and the people responsible for compliance are often in completely different conversations. Which of the four pillars do you see senior leaders finding hardest to action in practice?