Run This Red Team Exercise Before AI Fraud Hits Your Team

A five-step threat simulation framework for leadership teams

TL;DR: Scammers cloned the voice of Italy’s defense minister with AI and called the country’s top business leaders asking for wire transfers. Giorgio Armani got the call. So did Prada’s co-founder. One billionaire wired about €1 million before anyone realized it was a scam. Red team exercises, where your team rehearses these attacks before they happen, build the reflexes that no policy document ever will.

What happens when an AI clone of a defense minister’s voice calls a country’s most powerful business leaders and asks for emergency wire transfers?

One billionaire sent one million euros before anyone caught it.

Today’s guest is Chris, founder of ToxSec and an AI security engineer whose career spans NSA operations, defense contracting, and Amazon. He writes about the attacks already happening so teams can build real defenses.

Chris gives you the full story behind the Italy deepfake, plus a five-step red team framework your leadership team can run in 90 minutes. Don’t miss it.

In this post, you’ll learn:

How AI voice cloning was used to steal one million euros from one of Italy’s most prominent business leaders

Why traditional security awareness training fails against AI-powered social engineering

A five-step red team exercise framework you can run with your leadership team this month

How to handle the three most common objections when you propose threat simulations

I’ll let Chris take it from here.

Red Team Thinking Turns Leaders Into the Hardest Target in the Room

Adversarial threat simulations teach teams to spot AI-powered social engineering before it costs real money.

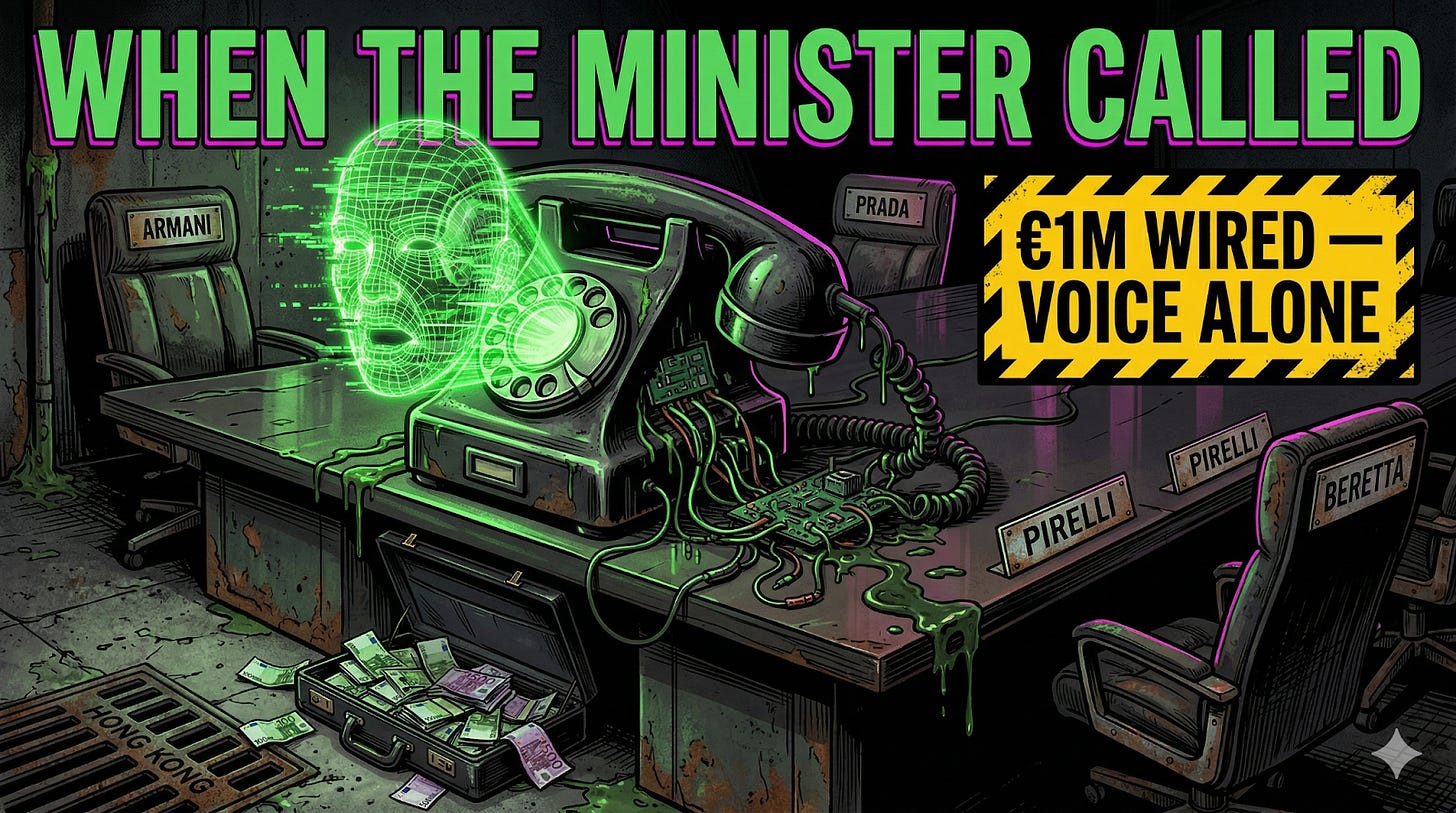

When Giorgio Armani’s Phone Rang, It Sounded Like the Government

In February 2025, some of Italy’s most powerful business leaders started receiving phone calls from what sounded like Defense Minister Guido Crosetto and his staff. The voice was convincing. The caller ID appeared legitimate. The story was urgent: Italian journalists had been kidnapped in the Middle East, and the government needed private funds wired to a Hong Kong bank account to secure their release. The money would be reimbursed by the Bank of Italy.

The targets were not junior employees. Giorgio Armani got the call. So did Prada co-founder Patrizio Bertelli, Pirelli executive Marco Tronchetti Provera, and the families behind Beretta firearms and Menarini pharmaceuticals. These are people who have entire teams filtering their communications.

Former Inter Milan owner Massimo Moratti wired approximately €1 million across two transfers before anyone caught it.

The voice on the phone was an AI-generated deepfake, a synthetic replica of the defense minister built from publicly available audio. The attackers never broke into a single system. They didn’t need to. They called people who trust their own ears and gave them a reason to act fast.

Italian police eventually traced the money to a Dutch bank account and froze it. But the attack itself worked exactly as designed: it exploited authority, urgency, and the biological fact that humans believe what they hear from a familiar voice.

Fortune reported in March 2026 that deepfake fraud drained $1.1 billion from U.S. corporate accounts in 2025 alone, tripling from the year before. Voice cloning fraud rose 680% in a single year. The annual security awareness quiz is not calibrated for this.

Why Reading About Threats Will Never Be Enough

Most organizations treat security awareness like a compliance checkbox. Once a year, everybody clicks through a module, answers a quiz about not sharing passwords, and goes back to work. The training evaporates in a week.

The problem is biological. Your brain stores information differently depending on how you encounter it. Reading about deepfake fraud is passive. Experiencing a simulated attack, feeling the pressure of a familiar voice making an urgent request, making a decision under stress, and then debriefing what went wrong: that burns the lesson into muscle memory. Pilots don’t learn to handle engine failures by reading about them. They sit in simulators and sweat through them.

Red teaming is the practice of assigning a group to simulate real attacks against your own organization. It started in military wargaming. Intelligence agencies and special operations units have used it for decades to pressure-test plans before the stakes are real. The concept is simple: if you want to find the holes in your defenses, hire someone to actually try breaking through them.

The good news for leaders who don’t run security teams? You don’t need a hacker on staff to get the benefit. The core skill, adversarial thinking, is trainable. And it applies to a lot more than cybersecurity.

What a Leadership Threat Simulation Actually Looks Like

Forget everything you’ve seen in movies about hackers in hoodies. A practical red team exercise for a leadership team looks more like a tabletop scenario: a structured, time-boxed session where you walk through a realistic attack and make decisions in real time.

Here’s a framework you can run with your team this month.

Pick a scenario that hits close to home. The Italy deepfake is a strong one for any team where leaders make decisions based on phone calls or video meetings. Other options: a vendor sends an urgent invoice with slightly different bank details (business email compromise), or a new AI tool an employee adopted on their own starts leaking client data to a third-party server (shadow AI, meaning software your team uses without IT approval).

Set the stage with pressure. The scenario needs to feel real. Give your team incomplete information, a ticking clock, and authority figures applying pressure. The Italian business leaders had all three: a voice they recognized, a patriotic cause, and urgency baked into every sentence. Simulate that friction.

Let them make the wrong call. This is the part most leaders skip because it feels uncomfortable. The whole point is to let the team experience failure in a safe environment. If everyone handles it perfectly, your scenario was too easy. Ratchet up the difficulty until someone gets fooled. Moratti is a billionaire with advisors and security staff, and he still wired the money. That’s the lesson.

Debrief without blame. The learning lives in the debrief, not the exercise. Ask three questions: What signals did we miss? Where did social pressure override individual judgment? What one process change would have caught this? Document the answers. These become your playbook.

Run it again in 90 days with a twist. Adversaries adapt. Your simulations should too. Change the attack vector. Swap the roles. The team that ran the voice clone scenario last quarter gets hit with a deepfake video call this quarter. Repetition with variation is what builds real pattern recognition.
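If it helps to keep the exercise honest, the five steps above can be captured in a small record that your facilitator fills in during the session. This is a minimal sketch, not a prescribed tool: the class name `TabletopExercise` and every field name are hypothetical, chosen only for illustration. The three debrief questions are quoted from the framework.

```python
from dataclasses import dataclass, field

# The three debrief questions, quoted from the framework.
DEBRIEF_QUESTIONS = (
    "What signals did we miss?",
    "Where did social pressure override individual judgment?",
    "What one process change would have caught this?",
)


@dataclass
class TabletopExercise:
    """One time-boxed session. All names here are illustrative assumptions."""

    scenario: str                     # step 1: a scenario that hits close to home
    pressure: list[str]               # step 2: incomplete info, ticking clock, authority
    duration_minutes: int = 90        # the post suggests 60 to 90 minutes
    someone_was_fooled: bool = False  # step 3: if nobody fails, it was too easy
    debrief: dict[str, str] = field(default_factory=dict)  # step 4: documented answers

    def record_answer(self, question: str, answer: str) -> None:
        """Store the team's answer to one of the three debrief questions."""
        if question not in DEBRIEF_QUESTIONS:
            raise ValueError(f"not a debrief question: {question!r}")
        self.debrief[question] = answer

    def unanswered(self) -> list[str]:
        """Debrief questions the team has not documented yet."""
        return [q for q in DEBRIEF_QUESTIONS if q not in self.debrief]

    def needs_harder_rerun(self) -> bool:
        """True when everyone handled it perfectly, so the next run should be harder."""
        return not self.someone_was_fooled
```

A session is complete once `unanswered()` returns an empty list. The documented answers are what the post calls your playbook, and `needs_harder_rerun()` is a reminder to raise the difficulty for step 5.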

The Resistance Is Predictable, and So Is the Fix

When you propose running threat simulations, you will hear three objections.

“We don’t have time.” The Italy attack was a phone call. A tabletop exercise takes 60 to 90 minutes. If your team can attend a quarterly all-hands, they can attend a quarterly threat sim. The ROI math practically does itself when you compare 90 minutes of prevention against a $1.1 billion industry-wide problem.

“That’s the security team’s job.” This is the objection that gets organizations burned. Attackers target people, not firewalls. The targets in Italy were CEOs and billionaire founders, not IT staff.

Your marketing lead approving a vendor invoice is an attack surface. Your executive assistant scheduling a call is an attack surface. Everyone who makes decisions based on trust is a target, which means everyone needs the training.

“Our team will feel like they’re being tested.” Good. Reframe it. Call it what it is: rehearsal time against threats that are already targeting organizations exactly like yours. Moratti built a business empire and ran one of Europe’s biggest football clubs. He still got fooled by a synthetic voice on the phone.

Competence and good intentions are not sufficient defenses against engineered deception.

The organizations that build adversarial thinking into their culture, not as a one-off event but as a recurring practice, develop something you cannot buy off the shelf. They develop institutional reflexes. When the real attack comes, and it will come, the team that has already rehearsed the pressure recognizes the pattern three seconds faster than the team that just read a policy document. Three seconds is often the entire margin between a near miss and a catastrophe.

Start small. Run one scenario. Debrief it with zero ego. Then do it again.

Thanks, Chris! ToxSec makes something clear that a lot of AI security content misses: the hard part of defending against these attacks isn’t technical, it’s human. Authority, urgency, and a familiar voice will bypass every policy document you’ve ever written. The only thing that builds real reflexes is practice under pressure.

If a billionaire with a full security team got fooled by a synthetic voice, your organization is not immune.

If You Only Remember This

AI-powered deepfake fraud drained $1.1 billion from U.S. corporate accounts in 2025, and the targets are executives and decision-makers, not IT departments. If a billionaire with a full security team got fooled, your team is not exempt.

Red team exercises work because they put your team under simulated pressure, and the learning lives in the debrief, not the scenario itself. Ask three questions: What signals did we miss? Where did social pressure override individual judgment? What one process change would have caught this?

Run one 90-minute tabletop scenario this quarter, then run another in 90 days with a different attack vector. Repetition with variation builds the institutional reflexes that no policy document can.
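One way to make the 90-day cadence concrete is to generate the dates up front and rotate the attack vector on each run. The sketch below is an assumption-laden illustration: the function name `plan_simulations`, its parameters, and the start date in the usage note are all hypothetical. The scenario names are taken from examples earlier in the post.

```python
from datetime import date, timedelta
from itertools import cycle, islice


def plan_simulations(first_run: date, scenarios: list[str], runs: int,
                     interval_days: int = 90) -> list[tuple[date, str]]:
    """Return (date, scenario) pairs spaced interval_days apart.

    Scenarios rotate in order, so consecutive runs always use a
    different attack vector as long as at least two are supplied.
    """
    if len(scenarios) < 2:
        raise ValueError("supply at least two scenarios to get variation")
    rotation = islice(cycle(scenarios), runs)
    return [
        (first_run + timedelta(days=interval_days * i), scenario)
        for i, scenario in enumerate(rotation)
    ]
```

For example, `plan_simulations(date(2026, 1, 5), ["voice clone call", "deepfake video call", "vendor invoice fraud"], runs=4)` schedules four sessions 90 days apart and returns to the voice clone scenario on the fourth run.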

What would your team do if someone who sounded exactly like your CEO called asking for an urgent wire transfer? Have you ever tested that?

Questions Leaders Are Asking

What is red teaming in a leadership context? Red teaming means assigning a group to simulate real attacks against your organization before actual attackers find the gaps. For leadership teams, this looks like tabletop exercises: realistic scenarios, decisions under pressure, and structured debriefs. You don’t need a cybersecurity background to run one.

How do AI deepfake voice scams actually work? Attackers clone a trusted person’s voice using publicly available audio like speeches or podcast appearances. They call targets, impersonate that person, and use urgency and authority to push fast action (usually a wire transfer). The technology is now cheap enough that small-time criminals have access to it.

How often should organizations run threat simulations? Every 90 days with a different attack scenario each time. Repetition with variation builds pattern recognition across multiple threat types. Adversaries adapt constantly, and your simulations need to keep pace. One exercise per year is a checkbox, not a defense.

Can small organizations benefit from red team exercises? Absolutely. A practical exercise is a 60- to 90-minute tabletop scenario, not a technical hack. Pick a realistic attack, give your team incomplete information and a ticking clock, let them make decisions, and debrief without blame. The debrief is where the real learning happens.

What is the biggest AI security threat facing leaders in 2026? Deepfake voice and video fraud. Fortune reported it drained $1.1 billion from U.S. corporate accounts in 2025, tripling year-over-year. Voice cloning fraud rose 680% in a single year. These attacks target trust and authority rather than technical systems, making every decision-maker a potential target.

Sources Referenced

Fortune, March 2026 — Deepfake fraud drained $1.1 billion from U.S. corporate accounts in 2025; voice cloning fraud rose 680% year-over-year

Italy deepfake defense minister incident, February 2025 — Widely covered by BBC, Reuters, AP

Thanks again to Joel for hosting this! As always, feel free to ask either one of us questions in the comments!
