Most People Use AI Like Google; Explorers Treat It Like a Lab

A look at the small group of people who treat AI like a system, not a shortcut

TL;DR: AI users fall into three categories: surface-level users who treat AI like search, builders who integrate AI into workflows, and explorers who study AI's patterns, quirks, and failures. Leor Gayr, author of Exploring ChatGPT, argues that explorer-mode thinking produces the deepest understanding of AI systems and the most transferable skills for leaders.

I’ve been thinking about this for a while, especially as I coach executives:

Why do some people get dramatically more out of AI than others, even when they’re using the same tools?

It’s not about the prompts. It’s not even about the use case. More and more, I realize that it’s about how they approach the system itself.

Leor Gayr runs Exploring ChatGPT, one of the most thoughtful AI newsletters I’ve come across. He doesn’t write “how to” content; he writes “what happens when” content, pushing AI into strange territory, studying the failures, and mapping patterns that most users never see. It’s a refreshing take on AI that I read every week, and I wanted to share it with you.

In this piece, he breaks AI users into three clear groups and makes a case that the third group, the explorers, are building something the other two aren’t.

Whether you’re a leader trying to understand what AI can really do or you’re already building with it, this reframe will change how you think about your own relationship with these tools.

Here’s Leor, in his signature poetic style. Enjoy!

AI Explorers: The People Going Down the Rabbit Hole

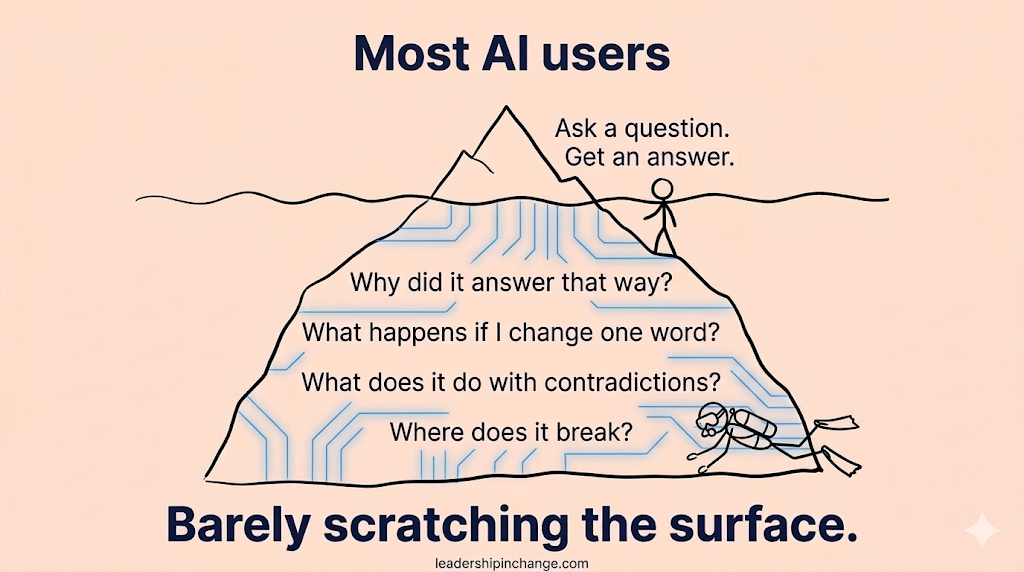

Most AI users are barely scratching the surface.

They ask a question.

They get an answer.

Maybe they generate some text, summarize an article, or help write an email.

That’s the surface layer.

But a small group of people is starting to interact with these systems very differently. Instead of treating AI like a faster Google, they’re studying how it behaves. They’re pushing it into weird situations, changing prompts, testing limits, and watching what happens.

They’re not just using AI.

They’re exploring it.

And the difference between those two things is bigger than most people realize.

Because once you stop treating AI like a tool and start treating it like a system, you begin to notice something strange.

These models have patterns.

They have quirks.

They fail in specific ways.

Sometimes the failures are more interesting than the successes.

The Three Ways People Are Using AI

Right now, AI usage falls into three broad groups.

The vast majority of people are using AI the way they used search engines fifteen years ago. Faster answers. Quick explanations. Summaries. Writing help. It’s basically a convenience layer on top of normal work.

AI makes things easier, but it doesn’t fundamentally change how people think about the technology.

Then there’s a smaller group using AI more structurally: the builders, developers, operators, founders, and researchers wiring AI into real systems such as workflows, automation pipelines, software tools, and analytics loops.

For them, AI isn’t just useful.

It’s infrastructure.

But there’s an even smaller group that interacts with AI in a completely different way.

Explorers.

They aren’t trying to get the fastest answer. They’re trying to understand how the system behaves. They test edge cases. They push prompts into unusual territory. They look for patterns in how models reason and where those patterns break.

The question stops being “What can this do?”

It becomes “What exactly is this thing?”

What Makes an Explorer Different

The difference between explorers and everyone else is mostly mindset.

Most people treat AI like a vending machine.

You put a prompt in.

An answer comes out.

Explorers watch the machine instead.

They notice how responses change depending on wording. They test what happens when instructions conflict. They push the model into uncomfortable territory just to see how it reacts.

And when the system fails, they pay attention.

Because failures reveal structure.

Researchers studying large language models often learn more from edge cases than from successful outputs. Some of the most useful insights into how models behave have come from analyzing the exact moments when they break down; the GPT-4 Technical Report, for instance, devotes substantial space to adversarial testing and known limitations (OpenAI 2023).

Explorers understand that.

Mistakes aren’t just errors.

They’re signals.

How Someone Actually Becomes an AI Explorer

You don’t need a computer science degree for this.

You mostly need curiosity.

Instead of only asking AI to do tasks, start asking questions about the system itself. Change how you phrase prompts and see what happens. Ask the same question in multiple ways and compare the reasoning.

Patterns start to appear.

Sometimes the model becomes more confident. Sometimes it contradicts itself. Sometimes it produces answers that seem correct but feel strangely shallow.

That’s not random.

Researchers studying human interaction with automated systems have found that users often learn more about system behavior when something goes wrong than when everything works smoothly (Parasuraman and Riley 1997).

AI is no different.

Failures are often where the real structure shows up.

The Real Mindset Shift

At some point explorers realize something important.

AI doesn’t behave like normal software.

It behaves more like an environment.

Large language models generate behavior that wasn’t directly programmed. Some scientists describe these as emergent abilities that arise from scale rather than specific instructions (Wei et al. 2022).

That’s why exploration matters.

The people experimenting with these systems today are often discovering patterns before the research community fully explains them.

This feels a lot like the early internet.

Back in the 1990s, people were experimenting with websites and early online communities long before anyone understood how big the internet would become.

The people exploring deeply were the ones who saw what was coming first.

Something similar may be happening again.

And right now the frontier is still wide open.

References

OpenAI. (2023). GPT-4 Technical Report. arXiv:2303.08774.

Parasuraman, R., & Riley, V. (1997). Humans and Automation: Use, Misuse, Disuse, Abuse. Human Factors, 39(2), 230–253.

Wei, J., et al. (2022). Emergent Abilities of Large Language Models. arXiv:2206.07682.

Thank you, Exploring ChatGPT!

Leor’s framing stuck with me…

The failures ARE more interesting than the successes. If you’re only using AI when it works, you’re missing the most valuable data.

Join 4,300+ leaders learning to think with AI, not just use it...

Which Sounds Like You?

“I need systems, not just ideas” -> Join Premium (Starting at $49/yr, $1,345+ value): Tested frameworks for going deeper with AI: not just prompts, but the explorer mindset Leor describes.

“I need this built for my context” -> Get AI coaching, custom Second Brain setup, strategy audits.

Questions Leaders Are Asking

Q: What’s the difference between using AI and exploring AI?

Using AI means asking questions and getting answers. Exploring AI means testing boundaries, studying patterns in how models respond, and paying attention to failures. The explorer treats AI as a system to understand, not just a tool to operate.

Q: Do I need to be technical to explore AI?

No. Exploring AI is about curiosity and observation, not coding. If you can notice when an AI response feels off, ask “why did it do that?” and try a different approach, you’re already exploring. The skill is attention, not engineering.

Q: How do AI explorers learn faster than regular users?

They learn from failures, not just successes. When AI gives a wrong or surprising answer, explorers investigate why instead of simply re-prompting and moving on. This builds a mental model of how the system actually works, and that model carries over to other AI tools.

Q: Should leaders spend time exploring AI or just focus on productivity?

Both, but most leaders skip the exploring entirely. Even 30 minutes a week pushing AI into unfamiliar territory builds judgment that no tutorial can teach. The leaders who understand AI’s limits make better decisions about where to deploy it.

PS: Many subscribers get their Premium membership reimbursed through their company’s professional development budget. Use this template to request yours.
