Your AI Has a Job. Did Anyone Define It? (With Judy Ossello)

Context, permission, and handoffs: the signals your AI is missing

TL;DR: AI systems rarely fail at generating output. They fail because no one defined what they’re responsible for, what they’re not allowed to do, or when they should stop and escalate. Judy Ossello, a Fortune 100 program leader, draws on real corporate reorg experience to show how the same accountability gaps that derail human teams are now breaking AI systems, and offers a readiness check to fix it.

Imagine learning on a Friday that your VP is gone, watching senior leadership disappear the following week, and finding by Thursday that no one can tell you who you report to or what you’re responsible for.

That happened to Judy Ossello (AI Mechanic), and the way she navigated it (reading signals, defining her own role, making herself useful without a job description) is exactly how AI systems are operating right now in most organizations. The difference is that AI doesn’t know it’s improvising.

Judy is a Fortune 100 enterprise IT program leader and AI Mechanic who designs AI agents whose behavior stays predictable, interpretable, and governable under pressure. She’s spent years operating in high-pressure environments with unclear ownership and shifting roles, and she brings that same lens to AI systems.

What she lays out here is a framework every leader managing AI needs to internalize: if you don’t define the job, the system will define it for you.

In this post, you’ll learn:

Why AI systems drift the same way human roles drift after a major reorg

The three signals (context, permission, and handoffs) that break down in both humans and AI

Real failure patterns from production AI systems with unclear ownership

A 10-minute readiness check you can run on any AI system in your workflow

I’ll let Judy take it from here.

Why most AI systems don’t fail at output—they fail because no one defined what they’re responsible for.

Two years ago, I spent a week watching my org get dismantled in real time.

Friday: the VP was gone.

Monday: senior leadership started disappearing.

By Thursday, no one could tell me who I reported to—or what I was responsible for.

By Friday, I had a new role.

No job description.

No clear ownership.

No one accountable for defining it.

I Had a Job. No One Could Tell Me What I Was Responsible For.

The new org was our former internal nemesis.

We used to do bleeding-edge innovation for impatient business stakeholders. We invited people from my new org to meetings to show them how fast we moved and to warn them not to slow us down.

They ran operations for the systems we considered dinosaurs. Slow. Backend. Legacy.

But I still had a job.

And so, I showed up to work and asked anyone who looked in charge and made eye contact:

“What should I be doing?”

I also asked who my manager would be.

There had been a miscommunication.

My new manager didn’t know they could welcome me to the org.

I tried not to take it personally.

So I Made Myself Useful—Without a Job Description

If I had let my new org define my responsibility, I wouldn’t have had one.

Product and Engineering ran the show.

They already had three Scrum Masters who thought they did the program work.

To them, the program was a status-chasing annoyance.

I needed to justify my existence with some quick wins. But not too quick.

I positioned myself as a co-pilot for Product.

How could I:

take work they wanted to give away

make their job easier

help them shine

It turned out that Product loved to talk about their wins, ran their roadmap execution, and had one consistent complaint:

the business only gave them two weeks to prepare for quarterly planning.

But they didn’t want me to talk directly to the business. That was their job.

I had a job without clear responsibility, some guardrails on what I was not allowed to do, and no clear signal from my manager on whether to stay the course or escalate.

When no one defines the job, the work doesn’t stop—it gets defined in real time by whoever is closest to it.

That’s when roles expand, decisions get made without clear ownership, and behavior starts to drift.

When Jobs Are Unclear, People Start Looking for Signals

In my past work as a transition manager, I’ve partnered with HR to merge, stand up, and streamline orgs. I’ve also been on the other side as a program manager trying to hold execution steady and reset business expectations through major resourcing shifts.

This is what happens in most orgs under pressure, especially following a major reorg.

Jobs become unclear. Quarterly planning gets cancelled. The work doesn’t go away.

People who are finishing existing work start looking for signals on whether they should continue. Some have multiple jobs and no direct manager because their peers were laid off. They report directly to a VP or Senior Director, temporarily.

New team members start scheduling 1:1s to meet with their new peers and understand the work. They’re not just learning the org. They’re trying to define their role within it so they can pitch it to their new direct manager.

When jobs are unclear, people look for three signals:

Context → do we have enough information to act?

Permission → are we expected or allowed to do this?

Handoffs → who owns this, and where does it go next?

These aren’t new. This is how teams operate when clarity breaks down.

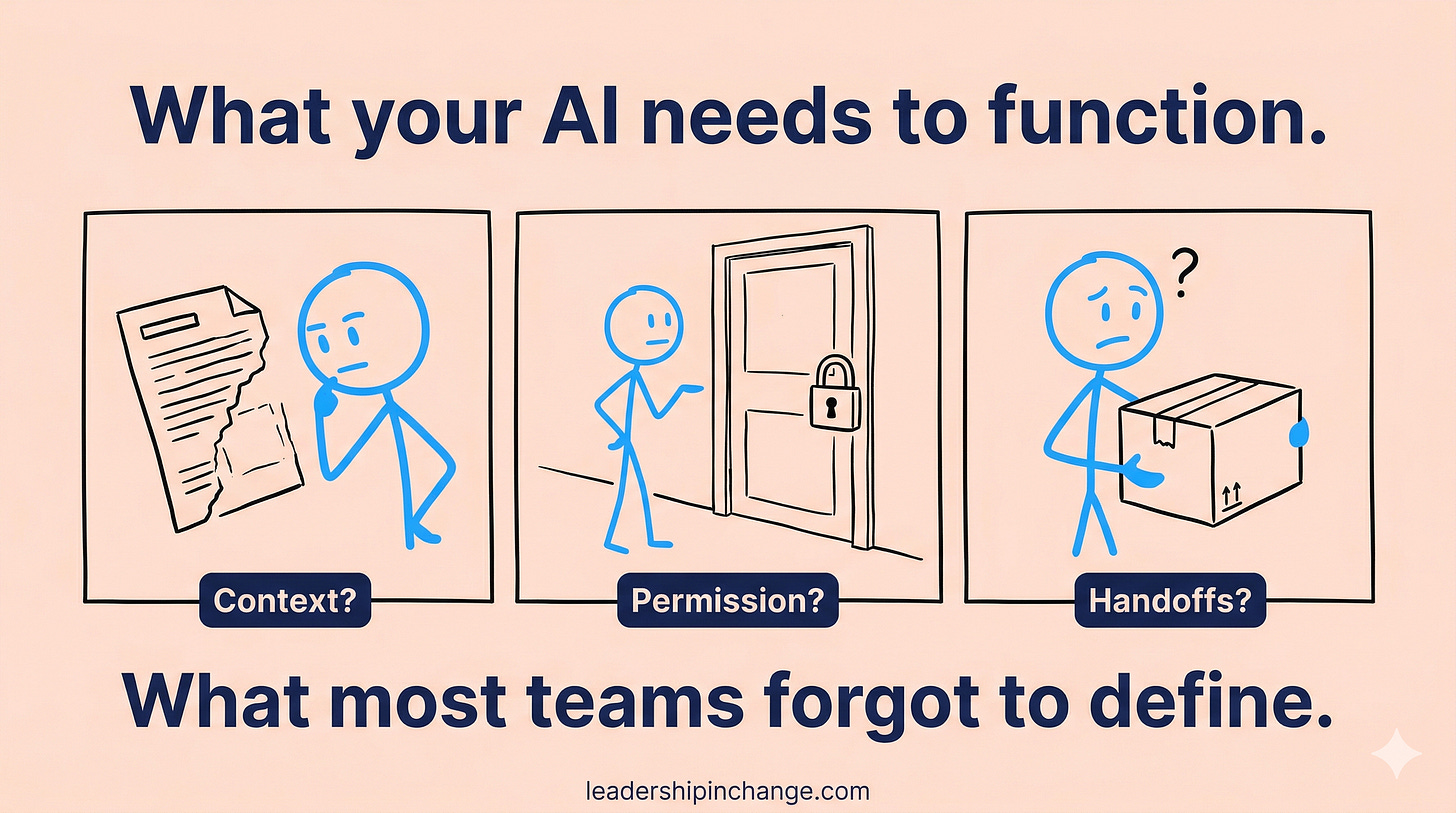

Now We’re Putting AI Into the Same Situation

Now we’re adding AI systems into that same environment.

And they rely on those same signals—context, permission, and handoffs—but without the shared understanding humans use to fill in the gaps.

When something is unclear, people ask, clarify, or escalate.

AI systems don’t. They infer.

When context is missing, they fill in the gaps.

When permission is unclear, they assume it.

When ownership is undefined, they keep going.

That’s how responsibility gets created in real time—and why AI behavior starts to drift.

The system isn’t trying to overstep. It’s trying to complete the job as it understands it—because it’s designed to finish the work.

The problem is that the job was never clearly defined.
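The contrast between asking and inferring can be made concrete. Below is a minimal Python sketch, assuming a hypothetical agent step that checks all three signals before acting and escalates the moment one is missing. `Task`, `AgentJob`, and `decide` are illustrative names, not part of any real agent framework.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    """Hypothetical next step an agent wants to take."""
    action: str                        # what the agent wants to do
    required_inputs: set[str]          # context the action depends on
    provided_inputs: dict[str, str]    # context actually available
    owner: Optional[str] = None        # who receives the output (handoff)


@dataclass
class AgentJob:
    """Hypothetical job definition: only explicitly listed actions are permitted."""
    allowed_actions: set[str] = field(default_factory=set)


def decide(job: AgentJob, task: Task) -> str:
    """Proceed only when context, permission, and handoff are all defined; otherwise escalate."""
    missing = task.required_inputs - set(task.provided_inputs)
    if missing:
        # Context: ask for what is missing instead of filling the gap
        return f"escalate: missing context {sorted(missing)}"
    if task.action not in job.allowed_actions:
        # Permission: an action that was never granted is treated as not allowed, never assumed
        return f"escalate: '{task.action}' is not an allowed action"
    if task.owner is None:
        # Handoff: with no one to receive the output, stop instead of continuing
        return "escalate: no owner for the output"
    return "proceed"


# Usage
job = AgentJob(allowed_actions={"troubleshoot"})
print(decide(job, Task("troubleshoot", {"ticket_id"}, {"ticket_id": "T-1"}, owner="support-team")))
# → proceed
print(decide(job, Task("issue_refund", {"ticket_id"}, {"ticket_id": "T-1"}, owner="support-team")))
# → escalate: 'issue_refund' is not an allowed action
```

The design choice is the default: when a signal is missing, the function returns an escalation instead of a best guess, which is what people do and what AI systems, left to themselves, don’t.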

This Is Already Breaking in Real Systems

These are the same failure patterns showing up in real systems:

Missing boundaries or stop conditions → agents operate beyond the intended scope

Example:

A customer support agent is designed to troubleshoot issues, but without a defined stop condition, it continues recommending actions after resolution, offering refunds, upgrades, or additional steps that weren’t requested or approved.

Overlapping responsibilities → execution becomes duplicated or incomplete

Example:

A support agent and a billing agent both have partial authority to issue refunds. In some cases, both initiate the refund (duplicate action). In others, each assumes the other handled it (dropped action).

Undefined ownership → agents persist without clear accountability

Example:

An internal reporting agent continues generating and distributing weekly metrics after the original team is restructured. The data becomes outdated, but no team is responsible for maintaining or shutting it down—so it continues operating unchecked.

None of this is a prompt problem—it happens when the system’s responsibility isn’t clearly defined.
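The overlapping-refund pattern is the most mechanical of the three, so it is the easiest to sketch. The Python below assumes a hypothetical `RefundLedger` that names exactly one agent as the refund owner: other agents hand off through a queue instead of acting, and the owner records each refund by ticket so a repeat request is flagged instead of executed. The agent names and the ledger are illustrative only, not a real billing API.

```python
from dataclasses import dataclass, field


@dataclass
class RefundLedger:
    """Hypothetical refund authority with exactly one owning agent."""
    owner: str                                                      # the only agent allowed to issue refunds
    issued: dict[str, float] = field(default_factory=dict)          # ticket_id -> amount refunded
    pending: list[tuple[str, float]] = field(default_factory=list)  # handoffs awaiting the owner

    def request_refund(self, agent: str, ticket_id: str, amount: float) -> str:
        if agent != self.owner:
            # Non-owners hand off explicitly instead of acting or assuming someone else did
            self.pending.append((ticket_id, amount))
            return f"handed off: ticket {ticket_id} queued for {self.owner}"
        if ticket_id in self.issued:
            # One refund per ticket: a repeat request is flagged, not executed
            return f"duplicate: ticket {ticket_id} already refunded"
        self.issued[ticket_id] = amount
        return f"refunded: ticket {ticket_id} for {amount:.2f}"

    def process_pending(self) -> list[str]:
        """The owner works through every handoff, so none is silently dropped."""
        results = [self.request_refund(self.owner, t, a) for t, a in self.pending]
        self.pending.clear()
        return results


# Usage: support and billing both notice the same refund
ledger = RefundLedger(owner="billing-agent")
print(ledger.request_refund("support-agent", "T-42", 20.0))  # → handed off: ticket T-42 queued for billing-agent
print(ledger.request_refund("billing-agent", "T-42", 20.0))  # → refunded: ticket T-42 for 20.00
print(ledger.process_pending())  # → ['duplicate: ticket T-42 already refunded']
```

One owner plus an explicit handoff addresses both outcomes in the example: the queue prevents the dropped refund, and the per-ticket record prevents the duplicate.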

You Didn’t Deploy a Tool. You Assigned a Job.

And that job has to be clearly defined—or the system will define it for you.

After a major reorg, people often take on stretch assignments in addition to their core responsibilities, especially when gaps start surfacing.

Now they’re operating across multiple areas, but only one of them is their real area of expertise.

For example, a Product Manager focused on a single squad may suddenly be asked to coordinate work across multiple teams. The work is different. The tools are different. But they’ll often force it into the workflow they already know—because that’s where they’re most effective.

The result: the work gets done, but not in the right way.

Cross-team dependencies get tracked in Jira tickets instead of a coordination plan.

Stakeholder alignment happens ad hoc instead of through structured checkpoints.

Gaps don’t show up until late—because no one is actually managing the system as a whole.

The same thing happens with AI systems.

When you give one system multiple jobs that require different behaviors, it struggles.

For example, a single agent might be expected to:

reduce learner friction

ensure term consistency

manage cross-toolkit cohesion

These sound aligned. They’re not the same job.

Each one requires a different kind of behavior:

Reducing friction → advising and suggesting

Ensuring consistency → auditing and enforcement

Managing cohesion → coordination and change management

But the system isn’t optimized for all three.

So it defaults to one pattern and applies it everywhere.

It suggests changes when it should enforce standards.

It enforces rules when it should guide the user.

It tries to coordinate across systems without a full view of the work.

Just like the Product Manager, the work gets done—but not in the right way.

Not well. And not predictably.

If You Don’t Define the Job, the System Will

So the question isn’t whether your system works.

It’s whether it’s working within a job you actually defined.

Because if you didn’t define it, the system is already doing it for you—in real time.

Filling in gaps. Making decisions. Expanding its scope.

And once that happens, behavior stops being predictable.

If AI is part of your workflow, you should be able to answer a few basic questions:

What is it responsible for?

What is it not allowed to do?

When does it stop or escalate?

If you can’t answer those clearly, the system will answer them for you.

And you won’t like the results.
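One way to make the three questions hard to skip is to write the answers down as data the system is reviewed against. Below is a minimal Python sketch, assuming a hypothetical `JobDefinition` with a field for each of the three questions plus an accountable owner; `readiness_gaps` returns whichever ones are still unanswered. It illustrates the idea and is not Judy’s readiness check itself.

```python
from dataclasses import dataclass, field


@dataclass
class JobDefinition:
    """Hypothetical record of an AI system's job, one field per readiness question."""
    name: str
    owner: str = ""                                                 # person accountable for its behavior
    responsible_for: list[str] = field(default_factory=list)        # What is it responsible for?
    not_allowed: list[str] = field(default_factory=list)            # What is it not allowed to do?
    stop_or_escalate_when: list[str] = field(default_factory=list)  # When does it stop or escalate?


def readiness_gaps(job: JobDefinition) -> list[str]:
    """Return the questions that still have no answer; an empty list means the job is defined."""
    checks = {
        "Who is accountable for it?": job.owner,
        "What is it responsible for?": job.responsible_for,
        "What is it not allowed to do?": job.not_allowed,
        "When does it stop or escalate?": job.stop_or_escalate_when,
    }
    # An empty string or empty list counts as "not answered"
    return [question for question, answer in checks.items() if not answer]


# Usage, mirroring the customer support agent described earlier
support = JobDefinition(
    name="customer-support-agent",
    owner="Support Operations lead",
    responsible_for=["troubleshoot reported issues"],
    not_allowed=["issue refunds", "offer upgrades"],
    stop_or_escalate_when=["issue is resolved", "customer requests a refund"],
)
print(readiness_gaps(support))  # → []

draft = JobDefinition(name="weekly-metrics-agent", responsible_for=["send weekly metrics"])
print(readiness_gaps(draft))
# → ['Who is accountable for it?', 'What is it not allowed to do?', 'When does it stop or escalate?']
```

A non-empty result is the signal to stop and define the job before building, rather than letting the system define it in production.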

Start Here: Define the Job Before the System Does

I put these questions into a simple readiness check.

Run it:

before building a new agent

when behavior starts to feel off

before connecting your system to tools or workflows

Because pressure doesn’t create failure.

It reveals whether responsibility was ever clearly defined.

Thank you, Judy Ossello (AI Mechanic)!

Judy’s core insight here is one most AI content completely misses: the problem with your AI system probably isn’t the model or the prompts; it’s that nobody defined what the system is actually responsible for, the same way you’d define it for a new hire after a reorg.

If You Only Remember This

AI systems don’t fail because their outputs are bad. They fail because no one defined what they’re responsible for, what they’re not allowed to do, or when they should stop. If you can’t answer those three questions about every AI system in your workflow, the system is answering them for you.

The same three signals that break down in human teams after a reorg (context, permission, and handoffs) are the exact signals AI systems need to function predictably. When any of the three is missing, behavior drifts, whether it’s a person or an agent.

Before building or connecting any AI system, run a readiness check: What is it responsible for? What is it not allowed to do? When does it stop or escalate? Ten minutes of clarity now prevents months of unpredictable behavior later.

What AI systems are running in your workflow right now, and could you answer those three questions about each of them?

Questions Leaders Are Asking

What does AI accountability mean for business leaders? AI accountability means clearly defining what an AI system is responsible for, what it cannot do, and when it should stop or hand off to a human. Most AI failures in organizations come from missing accountability, not bad model performance. Leaders need to treat AI system governance the same way they treat role clarity for human employees.

What is AI agent drift? AI agent drift happens when a system gradually expands beyond its intended scope because its boundaries, stop conditions, and ownership were never clearly defined. The agent keeps completing work as it understands it, filling in gaps and making assumptions, similar to how an employee without a job description starts defining their own role in real time.

How do I know if my AI system has unclear ownership? Three warning signs: the system is doing work no one explicitly asked it to do, multiple teams think someone else is managing it, or no one can clearly explain what it’s responsible for and when it should escalate. If you can’t name the person accountable for the system’s behavior, ownership is unclear.

What are context, permission, and handoffs in AI systems? These are the three signals every AI system needs to operate predictably. Context means having enough information to act correctly. Permission means clear boundaries on what the system is or isn’t allowed to do. Handoffs mean defined ownership transitions: knowing who gets the output and what happens next. When any of the three is missing, behavior becomes unpredictable.

How do I run an AI system readiness check? Before building or connecting any AI system, answer three questions: What is it responsible for? What is it not allowed to do? When does it stop or escalate? Judy Ossello’s 10-minute readiness check walks you through this process in detail. Run it before every new agent build and whenever system behavior starts to feel off.

Sources Referenced

Judy Ossello, “10-Minute AI System Readiness Check,” AI Mechanic (Substack), March 18, 2026 (linked within article)
