AI Ethics: When Personalization Crosses the Line

Inside the fintech system that classified customers by psychiatric vulnerabilities

TL;DR: AI-powered psychological profiling in fintech crosses from personalization into exploitation when systems classify customers using psychiatric terminology to target vulnerabilities. A European fintech case study reveals automated clinical labeling used to exploit customer weaknesses at scale. The Projective Calibration Layer framework offers an ethical alternative that adjusts communication without manipulation.

Last year, Amazon paid $2.5 billion to settle claims that it used manipulative design in Prime subscriptions. Last month, the FTC sued an AI chatbot company for quietly locking hundreds of thousands of people into charges they never agreed to. The EU is building an entire law around this: the Digital Fairness Act, expected in late 2026, which targets AI profiling that exploits consumer vulnerabilities.

But those are headlines. What Mila Agius found was the inside of the machine.

Mila writes Heuristics vs Traps on Substack, one of the sharpest publications I’ve found on how fintech and AI quietly shape decisions. Here she shares her experience of being invited into a European fintech to help build “the most ethical personalization system.” The first slide she saw used psychiatric terminology to classify customers so the system could exploit their vulnerabilities for revenue.

If you’re buying AI tools for your organization, approving vendor platforms, or leading teams that interact with personalized systems every day, this is the question you need to be asking: do you actually know what’s happening on the other side of the mirror?

In addition to all this, Mila Agius is one of the best storytellers on Substack! Enjoy…

AI in Fintech: From Personalization to Psychological Profiling

European fintech companies actively position artificial intelligence as a way to improve customer experience. The narrative is flawless: technology serves the customer, makes life easier, and makes finances clearer and decisions better.

Look at the statements from the last two years. Klarna launched an AI assistant built on OpenAI technology that handled 2.3 million conversations in its first month, two-thirds of all support requests. Resolution time dropped from 11 minutes to two. Bunq, the second-largest neobank in Europe with 12.5 million users, is developing its AI assistant, Finn. After beta testing with more than 100,000 requests, Finn became fully conversational. Bunq says it resolves up to 40 percent of requests on its own and helps with another 35 percent.

Revolut announced an AI assistant positioned as a “financial companion” that adapts to customer needs and guides people toward “smarter money habits”. N26 launched an AI-powered insights module for personalized recommendations, clearly labeling AI-generated content and allowing users to disable the feature at any time.

In fraud prevention, AI is also on the front line. Wise says it uses machine learning to identify suspicious patterns and prevent fraud and scams. According to Visa, Visa Protect for A2A Payments increased fraud detection by 40 percent through real-time pattern analysis.

All of this sounds progressive. Faster, more convenient, safer. AI as the customer’s assistant, a technological shield against fraudsters and bureaucracy. Personalization for a better experience.

What stays off camera

But there is an AI use case companies do not talk about publicly. Not fraud detection and not chatbots. Deep psychological classification, which is essentially profiling customers to choose interaction tactics: what pressure to apply, which fears to exploit, what level of service to provide, which products to offer, and how to offer them.

How far can this go? I have a specific case.

I’m intentionally leaving out names and internal artefacts. The mechanism matters more than the organisation.

The invitation

In the autumn of 2023, the CEO of a European fintech called me. They were looking for a behavioural psychology consultant. The task sounded appealing: to design “the most ethical personalization system for customer experience”.

Finally, a company that wanted to do it right from the start. I agreed to a meeting.

Discovery: what I saw on a slide

A conference room in their office. Besides me, the CEO and the CTO were at the meeting. On the big screen was a presentation titled “Current personalization system”.

The first slide took my breath away.

Four quadrants. A compass in the center. In the corners, in large letters: Psychosis. Psychopathy. Hysteria. Neurosis.

This was not behavioural psychology. This was clinical psychiatric terminology used as a segmentation map.

The CTO began explaining the methodology with the enthusiasm of someone demonstrating a well-tuned mechanism. The first month of using the app was the profiling period. During it, the system used AI to collect behavioural markers: how the client interacted with support (frequency of requests, tone of messages, complaint types); how quickly they reacted to product offers, whether they clicked impulsively or studied them for a long time; and what patterns they showed in the app, whether they checked their balance five times a day or once a week, whether their spending was chaotic or planned.

The algorithm analyzed these markers and assigned each client one of four labels, each named after a psychiatric term.

The next slide showed twelve professional psychotypes, among them “Project manager”, “Strategic consultant”, “Detective”, “Bodyguard”, and “Financial adviser”.

“Model evolution,” the CEO explained. A second classification system that ran in parallel with the first.

The CTO clicked forward to a slide with application results. I will not go into the numbers, not least because all this happened two and a half years ago. But I can say one thing for sure: the numbers were impressive.

He showed examples. “Neurosis plus Bodyguard” meant an anxious client with a protective behavioural pattern. These clients were systematically frightened with risks and aggressively sold insurance. “Hysteria plus Status adviser” meant a demonstrative client looking for recognition. They were offered premium products, exclusivity, and a personal manager.

I looked at the slides and saw both the genius of the system and its monstrosity. It worked precisely because it exploited clinical vulnerabilities. It did not help clients. It used their weaknesses to maximize revenue.

The CEO looked at me expectantly.

“This is what we want to make more ethical. How do we improve it?”

The choice

I said I could not participate.

You cannot “improve” a system that is built on exploitation at its core. Cosmetic fixes will not make it ethical. This is not a question of optimizing wording or adding one consent checkbox in onboarding.

Silence hung in the room. The CEO did not understand. “But it works!” He claimed such practices were “not rare”; they just were not talked about publicly. The CTO began defending the system, citing competitors, the market, the metrics.

I left.

But professional curiosity remained. Where did this system come from? Who created it? How did psychiatric diagnostics end up in the core of a fintech product? I had no answers. Only fragments of the presentation in my memory and the feeling that I had seen only the tip of the iceberg.

The ethical debate is secondary here. The real question is what the system makes easy, and what it makes costly.

The meeting

In the summer of 2025, I was at a conference on fintech psychology in Zurich. Between sessions, over coffee, a man in his fifties approached me. Swiss accent. He introduced himself as a consultant. We chatted about psychology in financial products, professional small talk.

Half an hour later he asked, “Did you consult for [company name]?”

I was surprised. Yes, there was one meeting, but I refused to work with them.

“I know why,” he said.

It turned out he was the second consultant. A psychologist specializing in vocational guidance using the methodology of Martin Achtnich. They brought him in after the first consultant, a psychiatrist, left.

In the first months, he did not know about the psychiatrist. He was given a task: help classify customers by professional psychotypes for better product personalization. It sounded normal.

When he learned about the first system, the four psychiatric quadrants, they explained that the two models complemented each other. The first, with clinical terminology, was used as a “pressure map”: what triggers the client reacts to and where communication breaks. The second, the vocational guidance model, produced tactical profiles: which products to offer and what language to use.

He worked with them for a year and a half. Both systems ran in parallel: the first month was the profiling period using the first model, the second month was the vocational guidance classification, and then came quarterly reclassification. “People change, especially under the pressure of financial problems,” was how he described it. In their logic, a client could “shift” between labels, and the system adjusted its tactics accordingly.

Then the next phase began. The company started translating the whole system into machine learning algorithms. The psychiatrist and he personally tagged customers in a CRM or a spreadsheet, forming a dataset for training. The AI had to learn to classify automatically, to recognize patterns they had identified manually.

“I left then,” he said. “I realized we did not create a personalization tool. We created a weapon. And now they are scaling it to hundreds of thousands of customers.”

He felt ashamed. He asked me never to name the company or the people. I promised, and I keep my word. I do not claim this is how all fintech works. I am showing a specific mechanism that can be built into any product if “success” is measured only by revenue growth and conversion under pressure. The danger is in how repeatable it is and how easily it scales.

Understanding

After our conversation, I sat alone for a long time, trying to process the scale.

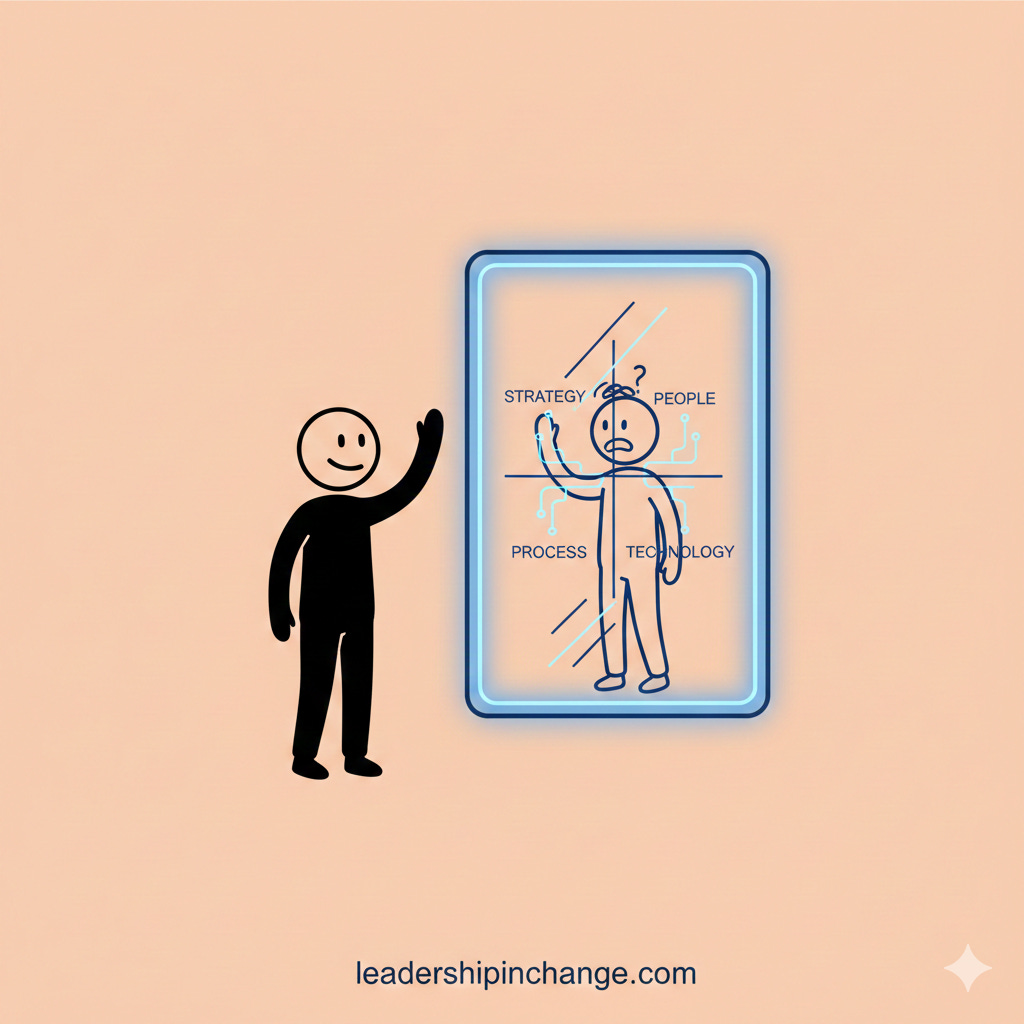

The problem was not classification itself. Classification is a powerful and necessary tool. Different customers really do need different approaches. Some need maximum transparency and details. Some need simplicity and automation. Some value personal attention. Some want full autonomy.

The problem came down to three things.

First, the foundation. Psychiatric terminology as labels instead of behavioural preferences. Not “this client prefers detailed information”, but “this client is neurotic”. Not “this client values simplicity”, but “this client is psychotic, they need a simplified interface”.

Second, the goal. Exploiting vulnerabilities instead of helping. The system did not ask, “How do we help an anxious client feel more confident in financial decisions?” It asked, “How do we use the client’s anxiety to sell more insurance?”

Third, scale. And here AI plays the key role.

When the psychiatrist worked manually, the process was limited by time and throughput. The ceiling for manual work is dozens of clients, a hundred at most.

When they moved it into algorithms, the system started working like a conveyor belt. Each new client automatically received a psychiatric label in the first month. Then came regular reclassification. No human involvement. Only an algorithm trained on logic that had once been built by hand.

AI did not invent this exploitation system. With its help, they tried to industrialize it and make it invisible to customers. Technology did not create the problem. It took a human intention to exploit psychological weaknesses for profit and removed the scale constraint. AI helped turn exploitation into mass production.

Below is a model that can be built into a product so it works for the customer, not against them. I call it the Projective Calibration Layer, or PCL.

The core principle is that PCL does not divide people into psychological types and does not “judge” personality. It adjusts the language of the product and support to how a person finds it easiest to take in information.

If you disagree, don’t argue the conclusion. Stress-test the lens: does it predict behaviour you already see in the field?

Projective Calibration Layer, PCL

We are not measuring who you are. We are measuring how it is easiest for you to take in information: which style of explanation is clearer, which tone reduces tension, how many details you need, and what matters more: control, speed, clear status updates, or human support. This is a calibration of product and support language, not a label on a person.

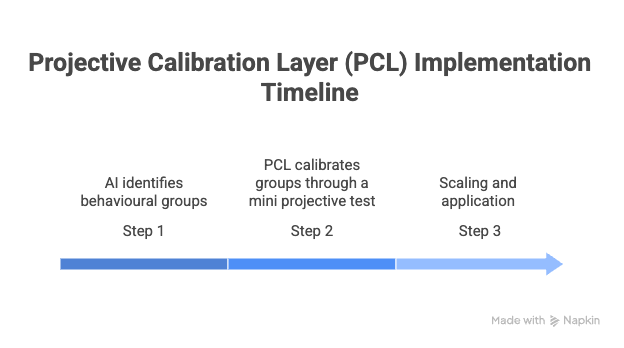

Step 1: AI identifies behavioural groups.

The system analyzes patterns in the app and in support: where a person doubts, what they double-check, which steps they abandon, how they react to delays and errors. The output is groups defined not by personality, but by how someone interacts with the service.
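To make Step 1 concrete, here is a minimal Python sketch of grouping customers by interaction patterns with a plain k-means loop. The feature names (`double_checks`, `abandoned_steps`, `delay_contacts`) and the choice of k-means are assumptions for illustration, not the method used in the article; the point is that every input describes how someone uses the service, not who they are.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class InteractionFeatures:
    """Hypothetical per-customer interaction metrics. None of them describe personality."""
    double_checks: float    # e.g. how often per week the customer re-verifies a payment
    abandoned_steps: float  # e.g. share of started flows left unfinished (0..1)
    delay_contacts: float   # e.g. support contacts opened right after a delay or error

    def as_vector(self) -> tuple[float, ...]:
        return (self.double_checks, self.abandoned_steps, self.delay_contacts)


def kmeans_groups(customers: list[InteractionFeatures], k: int, iterations: int = 20) -> list[int]:
    """Assign each customer a behavioural group id using a basic k-means loop.

    Centroids are seeded with the first k customers so the output is deterministic.
    """
    points = [c.as_vector() for c in customers]
    centroids = [list(p) for p in points[:k]]
    labels = [-1] * len(points)
    for _ in range(iterations):
        # Assignment step: each customer joins the group with the nearest centroid.
        new_labels = [min(range(k), key=lambda j: math.dist(p, centroids[j])) for p in points]
        if new_labels == labels:
            break  # converged: no customer changed group
        labels = new_labels
        # Update step: move each centroid to the mean of its current members.
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            if members:
                centroids[j] = [sum(values) / len(members) for values in zip(*members)]
    return labels


customers = [
    InteractionFeatures(9, 0.1, 0),  # re-checks often, rarely abandons a flow
    InteractionFeatures(1, 0.6, 3),  # abandons flows, contacts support after delays
    InteractionFeatures(8, 0.2, 1),
    InteractionFeatures(2, 0.7, 4),
]
print(kmeans_groups(customers, k=2))  # → [0, 1, 0, 1]
```

In a real system the features would be standardized first, because k-means is sensitive to scale; that step is left out here to keep the sketch short.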

Step 2: PCL calibrates groups through a mini projective test.

Questionnaires create a lot of noise: people fill them out on autopilot, embellish their answers, and respond very differently depending on their mood. A projective mini test reduces this effect. There are no right or wrong answers. From two or three images, such as covers, posters, or visual scenes, the customer chooses the one that feels closest to them. We use this not as “psychology”, but as a quick and gentle way to understand preferences for how information is presented.
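One way to sketch Step 2 in Python is a majority vote: each customer in a calibration sample picks an image, each image is mapped in advance to a presentation preference, and the most common preference within a behavioural group becomes that group's default. The image ids, the `IMAGE_TO_STYLE` table, and majority voting itself are hypothetical choices for illustration.

```python
from collections import Counter

# Hypothetical mapping from a projective-test image to the presentation style it signals.
IMAGE_TO_STYLE = {
    "detailed_map": "detailed",    # dense, annotated scene: wants full detail
    "single_path": "simple",       # one clear road: wants short, step-by-step text
    "handshake": "human_support",  # two people talking: prefers a person to explain
}


def calibrate_groups(choices: list[tuple[int, str]]) -> dict[int, str]:
    """Map each behavioural group id to its most frequently chosen presentation style.

    `choices` holds (group_id, image_id) pairs from the mini projective test.
    Ties go to the style that appeared first for that group.
    """
    votes: dict[int, Counter] = {}
    for group_id, image_id in choices:
        style = IMAGE_TO_STYLE.get(image_id)
        if style is None:
            continue  # ignore unknown images rather than guess a preference
        votes.setdefault(group_id, Counter())[style] += 1
    return {group: counter.most_common(1)[0][0] for group, counter in votes.items()}


sample = [
    (0, "detailed_map"), (0, "detailed_map"), (0, "handshake"),
    (1, "single_path"), (1, "handshake"), (1, "single_path"),
]
print(calibrate_groups(sample))  # → {0: 'detailed', 1: 'simple'}
```

The output is a preference per group, never a label per person, which keeps the calibration on the communication side.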

Step 3: Scaling and application.

After calibration, the link “group → communication preferences” can be applied to the entire base and used in onboarding new customers. PCL affects only the format of explanations, the support style, and the order of recommendations. It does not affect access to help and it does not create “hidden shelves”.
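The Step 3 constraint can be sketched in Python as a function whose only outputs are format and ordering. The style names, `STYLE_SETTINGS`, and the `Offer` and `Presentation` records are hypothetical; what the sketch shows is that the calibrated style changes tone, level of detail, and the order of offers, while the full offer list and every support channel pass through unchanged.

```python
from dataclasses import dataclass

# Hypothetical style settings: they only change wording, detail, and ordering.
STYLE_SETTINGS = {
    "detailed": {"detail_level": "full", "tone": "precise", "prefer_tag": "itemized"},
    "simple": {"detail_level": "short", "tone": "plain", "prefer_tag": "automated"},
    "human_support": {"detail_level": "short", "tone": "warm", "prefer_tag": "advisor"},
}
DEFAULT_STYLE = "simple"


@dataclass(frozen=True)
class Offer:
    name: str
    tags: frozenset[str]


@dataclass(frozen=True)
class Presentation:
    detail_level: str
    tone: str
    offers: tuple[Offer, ...]          # the same offers as the input, possibly reordered
    support_channels: tuple[str, ...]  # always the full list, never filtered by style


def present(style: str, offers: list[Offer], support_channels: list[str]) -> Presentation:
    """Apply a calibrated style to format and ordering only."""
    settings = STYLE_SETTINGS.get(style, STYLE_SETTINGS[DEFAULT_STYLE])
    preferred = settings["prefer_tag"]
    # Stable sort: offers with the preferred tag move up; nothing is removed or added.
    ordered = sorted(offers, key=lambda offer: preferred not in offer.tags)
    return Presentation(
        detail_level=settings["detail_level"],
        tone=settings["tone"],
        offers=tuple(ordered),
        support_channels=tuple(support_channels),
    )


offers = [
    Offer("Auto-save", frozenset({"automated"})),
    Offer("Advisor call", frozenset({"advisor"})),
    Offer("Fee breakdown", frozenset({"itemized"})),
]
view = present("human_support", offers, ["chat", "phone", "email"])
print([offer.name for offer in view.offers])  # → ['Advisor call', 'Auto-save', 'Fee breakdown']
```

Unknown styles fall back to a neutral default instead of raising, so a missing calibration never changes what a customer can reach.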

Three safeguards for PCL:

• Transparency plus an opt-out from personalization, especially for offers.

• No-penalty support rule: different styles are fine, different rights to help are not.

• No-hidden-shelf rule: personalization works through language, explanations, and ordering, not through hiding options.
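The three safeguards can also be expressed as checks that run against each personalized view before it ships. This is a hypothetical Python audit helper, not part of any existing framework: the `View` record and its fields are assumptions, and the function simply compares a personalized view with the neutral default and lists any violations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class View:
    """Hypothetical snapshot of what one customer is shown."""
    offers: tuple[str, ...]
    support_channels: frozenset[str]
    personalized: bool
    disclosed: bool  # does the UI tell the customer that personalization is on?


def audit(view: View, default: View, opted_out: bool) -> list[str]:
    """Return safeguard violations for a view compared with the neutral default."""
    problems = []
    # 1. Transparency plus opt-out: personalization is disclosed and switched off on request.
    if opted_out and view.personalized:
        problems.append("opt-out ignored")
    if view.personalized and not view.disclosed:
        problems.append("personalization not disclosed")
    # 2. No-penalty support: every profile keeps the same channels for getting help.
    if view.support_channels != default.support_channels:
        problems.append("support access differs from default")
    # 3. No hidden shelf: the same options exist; only their order may change.
    if sorted(view.offers) != sorted(default.offers):
        problems.append("options hidden or added")
    return problems


default = View(("A", "B", "C"), frozenset({"chat", "phone"}), personalized=False, disclosed=True)
reordered = View(("C", "A", "B"), frozenset({"chat", "phone"}), personalized=True, disclosed=True)
shelved = View(("A", "B"), frozenset({"chat"}), personalized=True, disclosed=False)
print(audit(reordered, default, opted_out=False))  # → []
print(audit(shelved, default, opted_out=True))
```

Reordering passes; hiding an option, dropping a support channel, or ignoring an opt-out each produce a named violation.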

Result: the product speaks to the customer in a language they understand, support works more precisely, and personalization stays useful, without manipulation and without punishing certain profiles.

For example, category-based personalization can act like a customized product map: the customer sees the shortest path to what fits them, without months of trial and comparison. The customer saves time, and the company gets faster conversion and earlier cashflow.

The simplest audit is this: where does personalization reduce cognitive load, and where does it quietly increase psychological leverage?

Thank you, Mila Agius!

Here’s what I keep coming back to after reading Mila’s piece: most of us are on the user side of these systems. We’re the ones seeing the friendly interface, the personalized recommendations, the “we’re here to help” messaging. And we’re making purchasing decisions for our organizations based on what we see on that side of the screen.

So the real question for every leader reading this:

If a vendor is personalizing your team’s experience, do you know what model is driving those decisions? And would you be comfortable if your people saw what was on that first slide?

